Click here to go see the bonus panel!

Hovertext:

Once every few weeks, he wakes up in a cold sweat because he had a dream that used an inaccurate star map.

New comic!

Today's News:

Written with love.

Written with love.

Last full day to get BAHFest tickets!

Just 1.5 weeks until BAHFest Houston and tickets are selling fast!

For the record, I can list 17 digits of pi, but only because of the magic of Andrew Huang.

Happy Groundhog Day!

Last week I jokingly said that POPL papers must pass an ‘intellectual intimidation’ threshold in order to be accepted. That’s not true of course, but it is the case that programming languages papers can look especially intimidating to the practitioner (or indeed, the academic working in a different sub-discipline of computer science!). They are full of dense figures with mathematical symbols, and phrases thrown around such as “judgements”, “operational semantics”, and the like. There are many subtle variations in notation out there, but you can get a long way towards following the gist of a paper with an understanding of a few basics. So instead of covering a paper today, I thought I’d write a short practitioner’s guide to decoding programming languages papers. I’m following Pierce’s ‘Types and Programming Languages’ as my authority here.

Let’s start from a place of familiarity: syntax. The syntax tells us what sentences are legal (i.e., what programs we can write) in the language. The syntax comprises a set of terms, which are the building blocks. Taking the following example from Pierce:

t ::=

true

false

if t then t else t

0

succ t

pred t

iszero t

Wherever the symbol t appears we can substitute any term. When a paper is referring to terms, the letter is often used, many times with subscripts to differentiate between different terms (e.g.,

,

). The set of all terms is often denoted by

. This is not to be confused with

, traditionally used to represent types.

Notice in the above that if on it’s own isn’t a term (it’s a token, in this case, a keyword token). The term is the if-expression if t then t else t.

Terms are expressions which can evaluated, and the ultimate results of evaluation in a well-formed environment should be a value. Values are a subset of terms. In the above example the values are true, false, 0 together with the values that can be created by successive application of succ to 0 (succ 0, succ succ 0, ...).

We want to give some meaning to the terms in the language. This is the semantics. There are different ways of defining what programs mean. The two you’ll see referred to most often are operational semantics and denotational semantics. Of these, operational semantics is the most common, and it defines the meaning of a program by giving the rules for an abstract machine that evaluates terms. The specification takes the form of a set of evaluation rules — which we’ll look at in just a moment. The meaning of a term t, under this world view is the final state (the value) that the machine reaches when started with t as its initial state. In denotational semantics the meaning of a term is taken to be some mathematical object (e.g., a number or a function) in some preexisting semantic domain, and an interpretation function maps terms in the language to their equivalents in the target domain. (So we are specifying what each term represents, or denotes, in the domain).

You might also see authors refer to ‘small-step’ operational semantics and ‘big-step’ operational semantics. As the names imply, these refer to how big a leap the abstract machine makes with each rule that it applies. In small-step semantics terms are rewritten bit-by-bit, one small step at a time, until eventually they become values. In big-step semantics (aka ‘natural semantics’) we can go from a term to its final value in one step.

In small-step semantics notation, you’ll see something like this: , which should be read as

evaluates to

. (You’ll also see primes used instead of subscripts, e.g.

). This is also known as a judgement. The

represents a single evaluation step. If you see

then this means ‘by repeated application of single-step evaluations,

eventually evaluates to

’.

In big-step semantics a different arrow is used. So you’ll see to mean that term

evaluates to the final value

. If we had both small-step and big step semantics for the same language, then

and

both tell us the same thing.

Rules are by convention named in CAPS. And if you’re lucky the authors will prefix evaluation rules with ‘E-‘ as an extra aid to tell you what you’re looking at.

For example:

if true then t2 else t3 t2 (E-IFTRUE)

Most commonly, you’ll see evaluation rules specified in the inference rule style. For example (E-IF):

These should be read as “Given the things above the line, then we can conclude the thing below the line.” In this particular example therefore, “Given that evaluates to

then ‘

’ evaluates to ‘

’.”

A programming language doesn’t have to have a specified type system (it can be untyped), but most do.

A type system is a tractable syntactic method for proving the absence of certain program behaviours by classifying phrases according to the kinds of values they compute – Pierce, ‘Types and Programming Languages.’

The colon is used to indicate that a given term has a particular type. For example, . A term is well-typed (or typable) if there is some T such that

. Just as we had evaluation rules, we can have typing rules. These are also often specified in the inference rule style, and may have names beginning with ‘T-‘. For example (T-IF):

Which should be read as “Given that term 1 has type Bool, and term 2 and term 3 have type T, then the term ‘if term 1 then term 2 else term 3’ has type T”.

For functions (lambda abstractions) we also care about the type of the argument and the return value. We can annotate bound variables to specify their type, so that instead of just ‘’ we can write ‘

’. The type of a lambda abstraction (single argument function) is written

, meaning it takes an argument of type

and returns a result of type

.

You’ll see the turnstile operator, , a lot in typing inference rules. ‘P

Q’ should be read as “From P, we can conclude Q”, or “P entails Q”. For example, ‘

’ says “From the fact that x has type T1, it follows that term t2 has type T2.”

We need to keep track of variable bindings for the types of the free variables in a function. And to do that we use a typing context (aka typing environment). Think of it like the environment you’re familiar with that maps variable names to values, only here we’re mapping variable names to types. By convention Gamma () is the symbol used for the typing environment. It will often pop up in papers by convention with no explanation given. I remember what it represents by thinking of a gallows capturing the variables, with their types swinging from the rope. YMMV!! This leads to the three place relation you’ll frequently see, of the form

. It should be read, “From the typing context

it follows that term t has type T.” The comma operator extends

by adding a new binding on the right (e.g., ‘

’).

Putting it all together, you get rules that look like this one (for defining the type of a lambda abstraction in this case)

Let’s decode it: “If (the part above the line), from a typing context with x bound to T1 it follows that t2 has type T2, then (the part below the line) in the same typing context the expression has the type

.”

If Jane Austen were to have written a book about type systems, she’d probably have called it “Progress and Preservation.” (Actually, I think you could write lots of interesting essays under that title!). A type system is ‘safe’ (type safety) if well-typed terms always end up in a good place. In practice this means that when we’re following a chain of inference rules we never get stuck in a place where we don’t have a final value, but neither do we have any rules we can match to make further progress. (You’ll see authors referring to ‘not getting stuck’ – this is what they mean). To show that well-typed terms don’t get stuck, it suffices to prove progress and preservation theorems.

Sometimes you’ll see authors talking about progress and preservation without explicitly saying why they matter. Now you know!

A few other things you might come across that it’s interesting to be aware of:

I’m really just an interested outsider looking on at what’s happening in the community. If you’re reading this as a programming languages expert, and there are more things that should go into a practitioner’s crib sheet, please let us all know in the comments!

NASA has confirmed an amateur astronomer's claim that he made contact with the space agency's IMAGE satellite that had been missing for more than 12 years.

The IMAGE spacecraft launched back in March 2000 and was "unexpectedly lost" on Dec. 18, 2005.

The U.S. space agency said that it successfully collected data from the satellite Tuesday afternoon, Jan. 30 from its Johns Hopkins Applied Physics Lab in Maryland. NASA reports it has been able to read some "basic housekeeping data" from IMAGE, which suggests its "main control system is operational."

"Scientists and engineers at NASA's Goddard Space Flight Center in Greenbelt, Maryland, will continue to try to analyze the data from the spacecraft to learn more about the state of the spacecraft," the space agency says in the release.

CONFIRMED! The satellite re-discovered on Jan. 20 is IMAGE, a NASA mission we lost contact with in Dec. 2005! [?] [?] Full details: https://t.co/IrD4ruLedspic.twitter.com/zpI5lpXxOp

-- NASA Sun & Space (@NASASun) January 31, 2018

"This process will take a week or two to complete as it requires attempting to adapt old software and databases of information to more modern systems."

The astronomer who said he discovered the still broadcasting IMAGE, Scott Tilley, told Science Magazine he was looking for a classified U.S. satellite, but instead picked up the one labeled "2000-017A" which belongs to NASA's long-lost $150 million mission's spacecraft.

NASA said in its Wednesday release that it received a signal ID of 166, which is the ID for IMAGE.

The IMAGE spacecraft was originally launched to study the magnetosphere, which is the invisible magnetic field that surrounds the Earth. Science reports the mission was considered a success by NASA before it went missing.

"IMAGE was designed to image Earth's magnetosphere and produce the first comprehensive global images of the plasma populations in this region," NASA reports in the release.

"After successfully completing and extending its initial two-year mission in 2002, the satellite unexpectedly failed to make contact on a routine pass on Dec. 18, 2005. After a 2007 eclipse failed to induce a reboot, the mission was declared over."

IMAGE was lost when NASA couldn't "establish a routine communication" with the Deep Space Network. The space agency's official report on the failure cited an unexpected error within the power system as the reason for its disappearance.

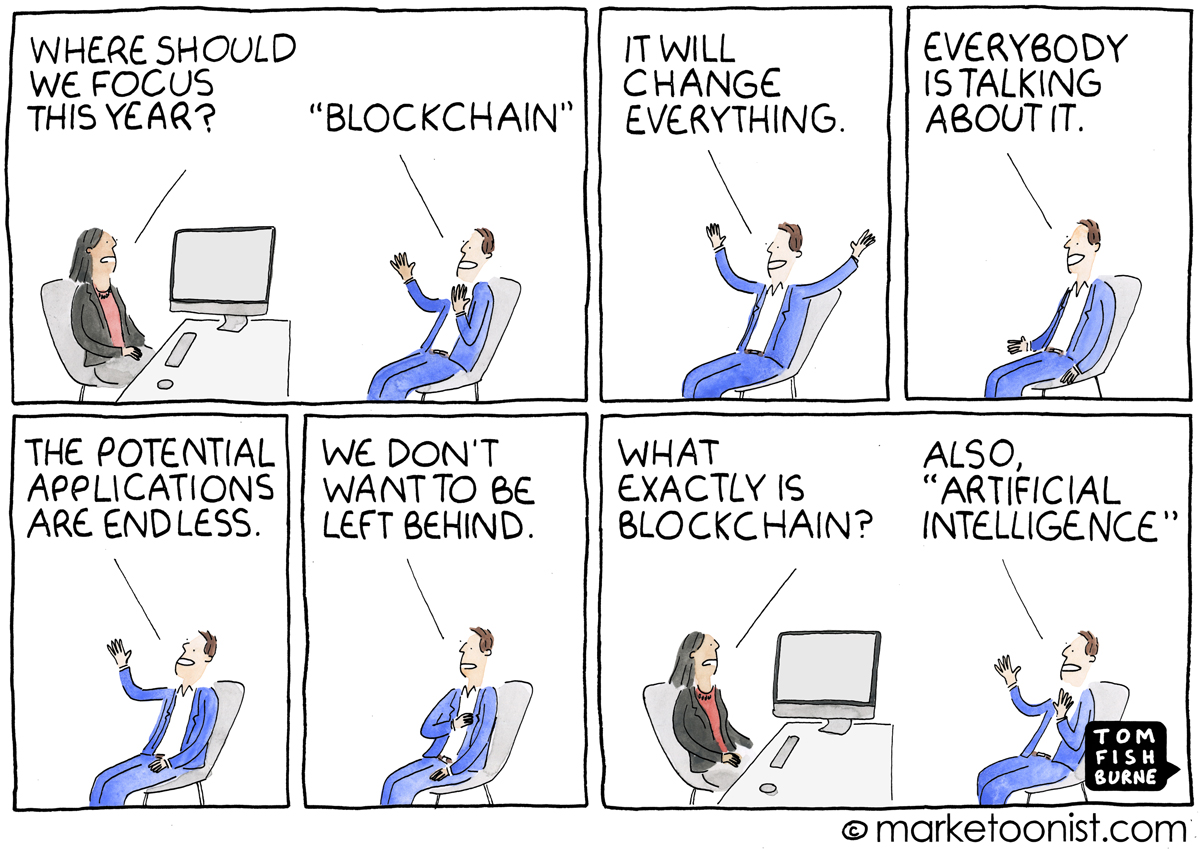

A few weeks ago, a publicly traded beverage company called Long Island Iced Tea Corp changed it’s name to Long Blockchain and announced that it was focusing on blockchain technology. Their stock surged 200% on the news.

Last week, Chanticleer Holdings, which owns burger restaurants and is a franchisee of Hooters, said that it was using blockchain for a new customer rewards program across its restaurants. Their stock shot up by 50%. As they put it:

“Use your Merit cryptocurrency mined by eating at Little Big Burger to get a buffalo chicken sandwich at American Burger Co., or trade them with your vegan friend so he can get a veggie burger at BGR. And that’s just the beginning.”

“Just the beginning” is right. Blockchain is suddenly on the radar of business and marketers are being asked, “what’s your blockchain strategy?” Blockchain is the shiny new thing of 2018.

I found an interesting blockchain resource for marketers, called “The CMO Primer for the Blockchain World” by Jeremy Epstein. It’s incredibly bullish and Jeremy himself admits that he may have “drunk too much Kool-Aid”. But it is a handy overview of some of the potential long-term use cases for marketers, including online ad verification, customer data protection, and product tracking. And I found the underlying metaphor of blockchain as a “trust machine” to be a useful lens.

I think marketers have a tendency to get so excited about shiny new things that we can lose sight of the fundamentals. In the long run, if we want to market iced tea and burgers, we can’t stray too far away from the iced tea and burgers.

Here are a couple other cartoons I drew about the shiny new thing:

“Shiny Object Syndrome“ May 2015

“The Emperor’s New Marketing Plan“ May 2017

Adam Victor Brandizzi"Sometimes, I... I program in weird languages..." *sobs*

Para esses dias de bloquinho, nada como um pudim de tapioca para repor a energia depois de cair na folia! E essa receita, que recebi do chef Carlos Ribeiro, é muito prática, nem vai ao fogo! Eu fazia uma versão com leite quente, mas virava um gororoba, rs. Essa é muito melhor. Vem pro bloco do pudim!

Disponha 500 gramas de tapioca em flocos em uma tigela.

Misture 1/2 litro de leite com 1 vidrinho pequeno de leite de coco e junte à tapioca. Deixe descansar por 2 horas em temperatura ambiente, até a tapioca absorver o líquido.

Junte 1 lata de leite condensado, 1 pacote pequeno de coco ralado (120 g), 2 colheres (sopa) de açúcar e misture.

Unte uma forma com um pouquinho de óleo e despeje a mistura. Leve à geladeira por 4 horas antes de desenformar.

Na hora de servir, finalize com leite condensado e coco ralado. Também vai bem com ameixas em calda, “como um manjar”, conta o chef Carlos Ribeiro, do restaurante Na Cozinha.

[All things that have been discussed here before, but some people wanted it all in a convenient place]

The most important study on the placebo effect is Hróbjartsson and Gøtzsche’s Is The Placebo Powerless?, updated three years later by a systematic review and seven years later with a Cochrane review. All three looked at studies comparing a real drug, a placebo drug, and no drug (by the third, over 200 such studies) – and, in general, found little benefit of the placebo drug over no drug at all. There were some possible minor placebo effects in a few isolated conditions – mostly pain – but overall H&G concluded that the placebo effect was clinically insignificant. Despite a few half-hearted tries, no one has been able to produce much evidence they’re wrong. This is kind of surprising, since everyone has been obsessing over placebos and saying they’re super-important for the past fifty years.

What happened? Probably placebo effects rode on the coattails of a more important issue, regression to the mean. That is, most sick people get better eventually. This is true both for diseases like colds that naturally go away, and for diseases like depression that come in episodes which remit for a few months or years until the next relapse. People go to the doctor during times of extreme crisis, when they’re most sick. So no matter what happens, most of them will probably get better pretty quickly.

In the very old days, nobody thought of this, so all their experiments were hopelessly confounded. Then people started adding placebo groups, this successfully controlled for not just placebo effect but regression to the mean, and so people noticed their studies were much better. They called the whole thing “placebo effect” when in fact there was no way to tell without further study how much was real placebo effect and how much was just regression to the mean. If we believe H&G, it’s pretty much all just regression to the mean, and placebo was a big red herring.

The rare exceptions are pain and a few other minor conditions. From H&G #3:

We found an effect on pain, SMD -0.28 (95% CI -0.36 to -0.19)); nausea, SMD -0.25 (-0.46 to -0.04)), asthma (-0.35 (-0.70 to -0.01)), and phobia (SMD -0.63 (95% CI -1.17 to -0.08)). The effect on pain was very variable, also among trials with low risk of bias. Four similarly-designed acupuncture trials conducted by an overlapping group of authors reported large effects (SMD -0.68 (-0.85 to -0.50)) whereas three other pain trials reported low or no effect (SMD -0.13 (-0.28 to 0.03)). The pooled effect on nausea was small, but consistent. The effects on phobia and asthma were very uncertain due to high risk of bias.

So the acupuncture trials seem to do pretty well. This probably isn’t because acupuncture works – some experiments have found sham acupuncture works equally well. It could be because acupuncture researchers have flexible research ethics. But Kamper & Williams speculate that acupuncture does well because it’s an optimized placebo. Normal placebos are just some boring little pill that researchers give because it’s the same shape as whatever they really want to give. Acupuncture – assuming that it doesn’t work – has been tailored over thousands of years to be as effective a pain-relieving placebo as possible. Maybe there’s some deep psychological reason why having needles in your skin intuitively feels like the sort of thing that should alleviate pain.

I want to add my own experience here, which is that occasionally I see extraordinary and obvious cases of the placebo effect. I once had a patient who was shaking from head to toe with anxiety tell me she felt completely better the moment she swallowed a pill, before there was any chance she could have absorbed the minutest fraction of it. You’re going to tell me “Oh, sure, but anxiety’s just in your head anyway” – but anxiety was one of the medical conditions that H&G included in their analysis. Plausibly they studied chronic anxiety, and pills are less good chronically than they are at aborting a specific anxiety attack the first time you take them. Or maybe her anxiety was somehow related to a phobia, one of the conditions H&G find some evidence in support of a placebo for. (Really? Phobia but not anxiety? Whatever.)

Surfing Uncertainty had the the best explanation of the placebo effect I’ve seen. Perceiving the world directly at every moment is too computationally intensive, so instead the brain guesses what the the world is like and uses perception to check and correct its guesses. In a high-bandwidth system like vision, guesses are corrected very quickly and you end up very accurate (except for weird things like ignoring when the word “the” is twice in a row, like it’s been several times in this paragraph already without you noticing). In a low-bandwidth system like pain perception, the original guess plays a pretty big role, with real perception only modulating it to a limited degree (consider phantom limb pain, where the brain guesses that an arm that isn’t there hurts, and nothing can convince it otherwise). Well, if you just saw a truck run over your foot, you have a pretty strong guess that you’re having foot pain. And if you just got a bunch of morphine, you have a pretty strong guess that your pain is better. The real sense-data can modulate it in a Bayesian way, but the sense-data is so noisy that it won’t be weighted highly enough to replace the guess completely.

If this is true, placebo should be strongest in subjective perceptions of conditions sent to the brain through low-bandwidth relays. That covers H&G’s pain and nausea. It doesn’t cover asthma and phobias quite as well, though I wonder if “asthma” is measured as subjective sensation of breathing difficulty.

What about depression? My gut would have told me depressed people respond very well to the placebo effect, but H&G say no.

I think that depressed mood may respond well to the placebo effect on a temporary basis – after all, mood seems noisy and low-bandwidth and hard to be sure of in the same way pain and nausea are. But most studies of depression use tests like the HAM-D, which measure the clinical syndrome of depression – things like sleep disturbance, appetite disturbance, and motor disturbance. These seem a lot less susceptible to subjective changes in the way the brain perceives things, so probably HAM-D based studies will show less placebo effect than just asking patients to subjectively assess their mood.