In the winter of 2011, a handful of software engineers landed in Boston just ahead of a crippling snowstorm. They were there as part of Code for America, a program that places idealistic young coders and designers in city halls across the country for a year. They'd planned to spend it building a new website for Boston's public schools, but within days of their arrival, the city all but shut down and the coders were stuck fielding calls in the city's snow emergency center.

In such snowstorms, firefighters can waste precious minutes finding and digging out hydrants. A city employee told the CFA team that the planning department had a list of street addresses for Boston's 13,000 hydrants. "We figured, 'Surely someone on the block with a shovel would volunteer if they knew where to look,'" says Erik Michaels-Ober, one of the CFA coders. So they got out their laptops.

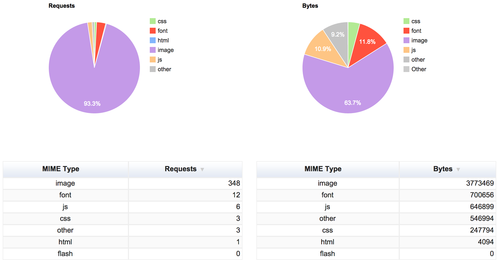

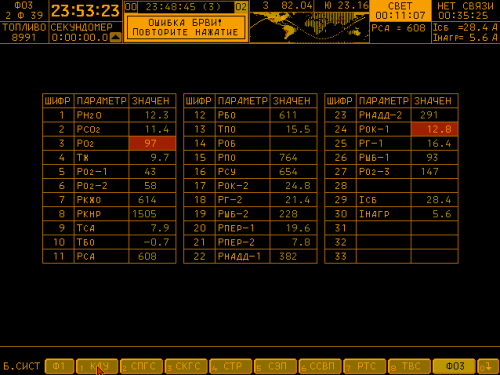

Screenshot from Adopt-a-Hydrant (Code for America)

Now, Boston has adoptahydrant.org, a simple website that lets residents "adopt" hydrants across the city. The site displays a map of little hydrant icons. Green ones have been claimed by someone willing to dig them out after a storm; red ones are still available. Last winter, 500 hydrants were adopted.

Maybe that doesn't seem like a lot, but consider what the city pays to keep it running: $9 a month in hosting costs. "I figured that even if it only led to a few fire hydrants being shoveled out, that could be the difference between life or death in a fire, so it was worth doing," Michaels-Ober says. And because the CFA team open-sourced the code, meaning they made it freely available for anyone to copy and modify, other cities can adapt it for practically pennies. It has been deployed in Providence, Anchorage, and Chicago. A Honolulu city employee heard about Adopt-a-Hydrant after cutbacks slashed his budget, and now Honolulu has Adopt-a-Siren, where volunteers can sign up to check for dead batteries in tsunami sirens across the city. In Oakland, it's Adopt-a-Drain.

Sounds great, right? These simple software solutions could save lives, and they were cheap and quick to build. Unfortunately, most cities will never get a CFA team, and most can't afford to keep a stable of sophisticated programmers in their employ, either. For that matter, neither can many software companies in Silicon Valley; the talent wars have gotten so bad that even brand-name tech firms have been forced to offer employees a bonus of upwards of $10,000 if they help recruit an engineer.

In fact, even as the Department of Labor predicts the nation will add 1.2 million new computer-science-related jobs by 2022, we're graduating proportionately fewer computer science majors than we did in the 1980s, and the number of students signing up for Advanced Placement computer science has flatlined.

There's a whole host of complicated reasons why, from boring curricula to a lack of qualified teachers to the fact that in most states computer science doesn't count toward graduation requirements. But should we worry? After all, anyone can learn to code after taking a few fun, interactive lessons at sites like Codecademy, as a flurry of articles in everything from TechCrunch to Slate have claimed. (Michael Bloomberg pledged to enroll at Codecademy in 2012.) Twelve million people have watched a video from Code.org in which celebrities like NBA All-Star Chris Bosh and will.i.am pledged to spend an hour learning code, a notion endorsed by President Obama, who urged the nation: "Don't just play on your phone—program it."

So you might be forgiven for thinking that learning code is a short, breezy ride to a lush startup job with a foosball table and free kombucha, especially given all the hype about billion-dollar companies launched by self-taught wunderkinds (with nary a mention of the private tutors and coding camps that helped some of them get there). The truth is, code—if what we're talking about is the chops you'd need to qualify for a programmer job—is hard, and lots of people would find those jobs tedious and boring.

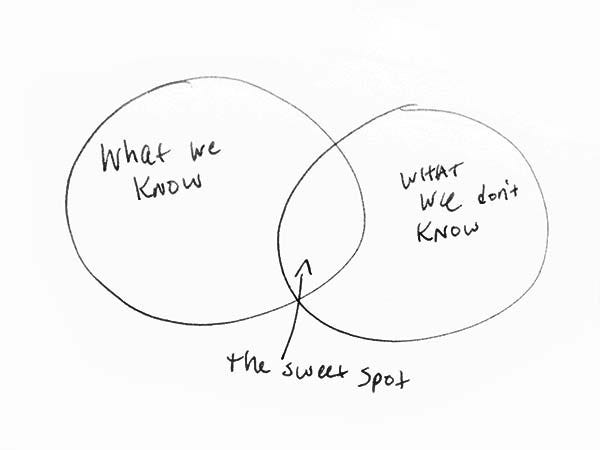

But let's back up a step: What if learning to code weren't actually the most important thing? It turns out that rather than increasing the number of kids who can crank out thousands of lines of JavaScript, we first need to boost the number who understand what code can do. As the cities that have hosted Code for America teams will tell you, the greatest contribution the young programmers bring isn't the software they write. It's the way they think. It's a principle called "computational thinking," and knowing all of the Java syntax in the world won't help if you can't think of good ways to apply it.

Unfortunately, the way computer science is currently taught in high school tends to throw students into the programming deep end, reinforcing the notion that code is just for coders, not artists or doctors or librarians. But there is good news: Researchers have been experimenting with new ways of teaching computer science, with intriguing results. For one thing, they've seen that leading with computational thinking instead of code itself, and helping students imagine how being computer savvy could help them in any career, boosts the number of girls and kids of color taking—and sticking with—computer science. Upending our notions of what it means to interface with computers could help democratize the biggest engine of wealth since the Industrial Revolution.

So what is computational thinking? If you've ever improvised dinner, pat yourself on the back: You've engaged in some light CT.

There are those who open the pantry to find a dusty bag of legumes and some sad-looking onions and think, "Lentil soup!" and those who think, "Chinese takeout." A practiced home cook can mentally sketch the path from raw ingredients to a hot meal, imagining how to substitute, divide, merge, apply external processes (heat, stirring), and so on until she achieves her end. Where the rest of us see a dead end, she sees the potential for something new.

If seeing the culinary potential in raw ingredients is like computational thinking, you might think of a software algorithm as a kind of recipe: a step-by-step guide on how to take a bunch of random ingredients and start layering them together in certain quantities, for certain amounts of time, until they produce the outcome you had in mind.

Like a good algorithm, a good recipe follows some basic principles. Ingredients are listed first, so you can collect them before you start, and there's some logic in the way they are listed: olive oil before cumin because it goes in the pan first. Steps are presented in order, not a random jumble, with staggered tasks so that you're chopping veggies while waiting for water to boil. A good recipe spells out precisely what size of dice or temperature you're aiming for. It tells you to look for signs that things are working correctly at each stage—the custard should coat the back of a spoon. Opportunities for customization are marked—use twice the milk for a creamier texture—but if any ingredients are absolutely crucial, the recipe makes sure you know it. If you need to do something over and over—add four eggs, one at a time, beating after each—those tasks are boiled down to one simple instruction.

Much like cooking, computational thinking begins with a feat of imagination, the ability to envision how digitized information—ticket sales, customer addresses, the temperature in your fridge, the sequence of events to start a car engine, anything that can be sorted, counted, or tracked—could be combined and changed into something new by applying various computational techniques. From there, it's all about "decomposing" big tasks into a logical series of smaller steps, just like a recipe.

Those techniques include a lot of testing along the way to make sure things are working. The culinary principle of mise en place is akin to the computational principle of sorting: organize your data first, and you'll cut down on search time later. Abstraction is like the concept of "mother sauces" in French cooking (béchamel, tomato, hollandaise), building blocks to develop and reuse in hundreds of dishes. There's iteration: running a process over and over until you get a desired result. The principle of parallel processing makes use of all available downtime (think: making the salad while the roast is cooking). Like a good recipe, good software is really clear about what you can tweak and what you can't. It's explicit. Computers don't get nuance; they need everything spelled out for them.
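The mise en place idea, organizing first so that searching is quicker later, can be sketched in a few lines of Python. This is only an illustration: the pantry list and the `find_unsorted` and `find_sorted` helpers are made up for the example. A scan of an unorganized list may have to touch every item, while a sorted list can be searched by repeatedly halving it.

```python
from bisect import bisect_left

def find_unsorted(items, target):
    """Linear scan: check items one by one, counting the steps taken."""
    steps = 0
    for item in items:
        steps += 1
        if item == target:
            return True, steps
    return False, steps

def find_sorted(sorted_items, target):
    """Binary search on an already-sorted list, using the standard bisect module."""
    i = bisect_left(sorted_items, target)
    return i < len(sorted_items) and sorted_items[i] == target

# Hypothetical pantry, invented for this example.
pantry = ["onions", "lentils", "cumin", "olive oil", "garlic", "salt"]
shelf = sorted(pantry)  # the mise en place step: organize once, up front

print(find_unsorted(pantry, "salt"))  # the scan has to look at all six items
print(find_sorted(shelf, "cumin"))
```

With six ingredients the difference is trivial; with millions of records, the sorted search needs only a couple dozen comparisons.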

Put another way: Not every cook is a David Chang, not every writer is a Jane Austen, and not every computational thinker is a Guido van Rossum, the inventor of the influential Python programming language. But just as knowing how to scramble an egg or write an email makes life easier, so too will a grasp of computational thinking. Yet the "learn to code!" camp may have set people on the uphill path of mastering C++ syntax instead of encouraging all of us to think a little more computationally.

The happy truth is, if you get the fundamentals about how computers think, and how humans can talk to them in a language the machines understand, you can imagine a project that a computer could do, and discuss it in a way that will make sense to an actual programmer. Because as programmers will tell you, the building part is often not the hardest part: It's figuring out what to build. "Unless you can think about the ways computers can solve problems, you can't even know how to ask the questions that need to be answered," says Annette Vee, a University of Pittsburgh professor who studies the spread of computer science literacy.

Indeed, some powerful computational solutions take just a few lines of code—or no code at all. Consider this lo-fi example: In 1854, a London physician named John Snow helped squelch a cholera outbreak that had killed 616 residents. Brushing aside the prevailing theory of the disease—deadly miasma—he surveyed relatives of the dead about their daily routines. A map he made connected the disease to drinking habits: tall stacks of black lines, each representing a death, grew around a water pump on Broad Street in Soho that happened to be near a leaking cesspool. His theory: The disease was in the water. Classic principles of computational thinking came into play here, including merging two datasets to reveal something new (locations of deaths plus locations of water pumps), running the same process over and over and testing the results, and pattern recognition. The pump was closed, and the outbreak subsided.
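For a sense of what "merging two datasets" looks like in code, here is a rough Python sketch of the same idea. The coordinates and the names "Pump B" and "Pump C" are invented for illustration and are not Snow's data. Each death is matched to its nearest pump, and the matches are tallied.

```python
from collections import Counter
from math import dist  # available in Python 3.8 and later

# Hypothetical map coordinates, invented for illustration; not Snow's data.
pumps = {
    "Broad Street": (0.0, 0.0),
    "Pump B": (5.0, 1.0),
    "Pump C": (-4.0, 6.0),
}
deaths = [(0.5, 0.2), (-0.3, 0.9), (1.1, -0.4), (4.6, 1.2), (0.2, -0.8)]

def nearest_pump(location, pumps):
    """Return the name of the pump closest to a location."""
    return min(pumps, key=lambda name: dist(location, pumps[name]))

def deaths_per_pump(deaths, pumps):
    """Merge the two datasets: tally each death under its nearest pump."""
    return Counter(nearest_pump(d, pumps) for d in deaths)

tally = deaths_per_pump(deaths, pumps)
print(tally.most_common())
```

The pattern that jumps out of the tally is the same one Snow's stacked black lines made visible on paper.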

Or take Adopt-a-Hydrant. Under the hood, it isn't a terribly sophisticated piece of software. What's ingenious is simply that someone knew enough to say: Here's a database of hydrant locations, here is a universe of people willing to help, let's match them up. The computational approach is rooted in seeing the world as a series of puzzles, ones you can break down into smaller chunks and solve bit by bit through logic and deductive reasoning. That's why Jeannette Wing, a VP of research at Microsoft who popularized the term "computational thinking," says it's a shame to think CT is just for programmers. "Computational thinking involves solving problems, designing systems, and understanding human behavior," she writes in a publication of the Association for Computing Machinery. Those are handy skills for everybody, not just computer scientists.
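To see how little machinery that matching idea needs, consider a minimal Python sketch. It is not the Code for America team's code; the `Hydrant` class, the `adopt` function, and the sample addresses are all hypothetical. It keeps a list of hydrants, records who has claimed each one, and colors it green or red accordingly.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Hydrant:
    hydrant_id: int
    address: str
    adopted_by: Optional[str] = None  # None means still available

    @property
    def color(self) -> str:
        """Green if someone has claimed the hydrant, red if it is available."""
        return "green" if self.adopted_by else "red"

def adopt(hydrants, hydrant_id, volunteer):
    """Claim a hydrant for a volunteer; return False if it is already taken."""
    hydrant = hydrants[hydrant_id]
    if hydrant.adopted_by is not None:
        return False
    hydrant.adopted_by = volunteer
    return True

# Hypothetical sample data, invented for this example.
hydrants = {
    h.hydrant_id: h
    for h in [Hydrant(1, "1 Example St"), Hydrant(2, "2 Example St")]
}

print(adopt(hydrants, 1, "alice"))  # first claim succeeds
print(adopt(hydrants, 1, "bob"))    # second claim on the same hydrant fails
print([(h.address, h.color) for h in hydrants.values()])
```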

In other words, computational thinking opens doors. For while it may seem premature to claim that today every kid needs to code, it's clear that they're increasingly surrounded by opportunities to code—opportunities that the children of the privileged are already seizing. The parents of Facebook founder Mark Zuckerberg got him a private computer tutor when he was in middle school. Last year, 13,000 people chipped in more than $600,000 via Kickstarter for their own limited-edition copy of Robot Turtles, a board game that teaches programming basics to kids as young as three. There are plenty of free, kid-oriented code-learning sites—like Scratch, a programming language for children developed at MIT—but parents and kids in places like San Francisco or Austin are more likely to know they exist.

Computer scientists have been warning for decades that understanding code will one day be as essential as reading and writing. If they're right, understanding the importance of computational thinking can't be limited to the elite, not if we want some semblance of a democratic society. Self-taught auteurs will always be part of the equation, but to produce tech-savvy citizens "at scale," to borrow an industry term, the heavy lifting will happen in public school classrooms. Increasingly, to have a good shot at a good job, you'll need to be code literate.

"Code literate." Sounds nice, but what does it mean? And where does literacy end and fluency begin? The best way to think about that is to look to the history of literacy itself.

Reading and writing have become what researchers have called "interiorized" or "infrastructural," a technology baked so deeply into everyday human life that we're never surprised to encounter it. It's the main medium through which we connect, via not only books and papers, but text messages and the voting booth, medical forms and shopping sites. If a child makes it to adulthood without being able to read or write, we call that a societal failure.

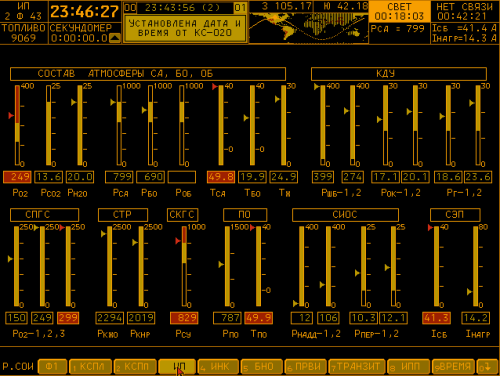

Yet for thousands of years writing was the preserve of the professional scribes employed by the elite. So what moved it to the masses? In Europe at least, writes literacy researcher Vee, the tipping point was the Domesday Book, an 11th-century survey of landowners that's been called the oldest public record in England.

A page from the Domesday Book (National Archives, UK)

Commissioned by William the Conqueror to take stock of what his new subjects held in terms of acreage, tenants, and livestock so as to better tax them, royal scribes fanned across the countryside taking detailed notes during in-person interviews. It was like a hands-on demo on the efficiencies of writing, and it proved contagious. Despite skepticism—writing was hard, and maybe involved black magic—other institutions started putting it to use. Landowners and vendors required patrons and clients to sign deeds and receipts, with an "X" if nothing else. Written records became admissible in court. Especially once Johannes Gutenberg invented the printing press, writing seeped into more and more aspects of life, no longer a rarefied skill restricted to a cloistered class of aloof scribes but a function of everyday society.

Fast forward to 19th-century America, and it'd be impossible to walk down a street without being bombarded with written information, from newspapers to street signs to store displays; in the homes of everyday people, personal letters and account ledgers could be found. "The technology of writing became infrastructural," Vee writes in her paper "Understanding Computer Programming As a Literacy." "Those who could not read text began to be recast as 'illiterate' and power began to shift towards those who could." Teaching children how to read became a civic and moral imperative. Literacy rates soared over the next century, fostered through religious campaigns, the nascent public school system, and the at-home labor of many mothers.

Of course, not everyone was invited in immediately: Illiteracy among women, slaves, and people of color was often outright encouraged, sometimes even legally mandated. But today, while only some consider themselves to be "writers," practically everybody reads and writes every day. It's hard to imagine there was ever widespread resistance to universal literacy.

So how does the history of computing compare? Once again, says Vee, it starts with a census. In 1880, a Census Bureau statistician, Herman Hollerith, saw that the system of collecting and sorting surveys by hand was buckling under the weight of a growing population. He devised an electric tabulating machine, and generations of these "Hollerith machines" were used by the bureau until the 1950s, when the first commercial mainframe, the UNIVAC, was developed with a government research grant. "The first successful civilian computer," it was a revolution in computing technology: Unlike the "dumb" Hollerith machine and its cousins, which ran on punch cards, vacuum tubes, and other mechanical inputs that had to be manually entered over and over again, the UNIVAC had memory. It could store instructions, in the form of programs, and remember earlier calculations for later use.[1]

[1] The evolution of communication technologies has always been an issue of memory. For thousands of years, the oral tradition had enough storage space to house the expanse of human records and information. As communities got bigger, oral tradition started maxing out. So a new technology sprang up, one that could distill thought into a series of symbolic scratches that could be packaged up, transported, and recompiled by the user into language and thought. But while books have immensely greater RAM than a song poem, a computer offers exponentially more capacity than either of these.

Once the UNIVAC was unveiled, research institutions and the private sector began clamoring for mainframes of their own. The scribes of the computer age, the early programmers who had worked on the first large-scale computing projects for the government during the war, took jobs at places like Bell Labs, the airline industry, banks, and research universities. "The spread of the texts from the central government to the provinces is echoed in the way that the programmers who cut their teeth on major government-funded software projects then circulated out into smaller industries, disseminating their knowledge of code writing further," Vee writes. Just as England had gone from oral tradition to written record after the Domesday Book, the United States in the 1960s and '70s shifted from written to computational record.

The 1980s made computers personal, and today it's impossible not to engage in conversations powered by code, albeit code that's hidden beneath the interfaces of our devices. But therein lies a new problem: The easy interface creates confusion around what it means to be "computer literate." Interacting with an app is very different from making or tweaking or understanding one, and opportunities to do the latter remain the province of a specialized elite. In many ways, we're still in the "scribal stage" of the computer age.

But the tricky thing about literacy, Vee says, is that it begets more literacy. It happened with writing: At first, laypeople could get by signing their names with an "X." But the more people used reading and writing, the more was required of them.

We can already see code leaking into seemingly far-removed fields. Hospital specialists collect data from the heartbeat monitors of day-old infants, and run algorithms to spot babies likely to have respiratory failure. Netflix is a gigantic experiment in statistical machine learning. Legislators are being challenged to understand encryption and relational databases during hearings on the NSA.

The most exciting advances in most scientific and technical fields already involve big datasets, powerful algorithms, and people who know how to work with both. But that's increasingly true in almost any profession. English literature and computer science researchers fed Agatha Christie's oeuvre into a computer, ran a textual-analysis program, and discovered that her vocabulary shrank significantly in her final books. They drew from the work of brain researchers and put forth a new hypothesis: Christie suffered from Alzheimer's. "More and more, no matter what you're interested in, being computationally savvy will allow you to do a better job," says Jan Cuny, a leading CS researcher at the National Science Foundation (NSF).

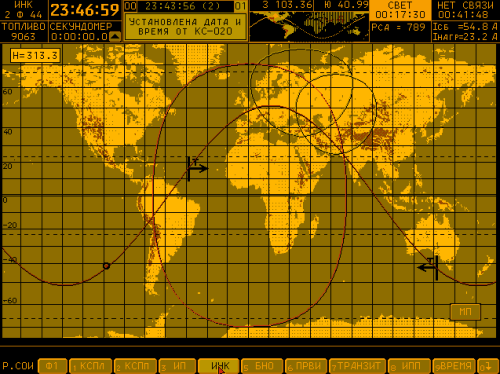

Grace Hopper led the team that developed the UNIVAC, the first commercial computer. (Smithsonian Institution)

It may be hard to swallow the idea that coding could ever be an everyday activity on par with reading and writing, in part because it looks so foreign (what's with all the semicolons and carets?). But remember that it took hundreds of years to settle on the writing conventions we take for granted today: Early spellings of words ("Whan that Aprille with his shoures soote") can seem as foreign to modern readers as today's code snippets do to nonprogrammers. Compared to the thousands of years writing has had to go from notched sticks to glossy magazines, digital technology has, in 60 years, evolved exponentially faster.

Our elementary-school language arts teachers didn't drill the alphabet into our brains anticipating Facebook or WhatsApp or any of the new ways we now interact with written material. Similarly, exposing today's third-graders to a dose of code may mean that at 30 they retain enough to ask the right questions of a programmer, working in a language they've never seen on a project they could never have imagined.

One day last year, Neil Fraser, a young software engineer at Google, showed up unannounced at a primary school in the coastal Vietnamese city of Da Nang. Did the school have computer classes, he wanted to know, and could he sit in? A school official glanced at Fraser's Google business card and led him into a classroom of fifth-graders paired up at PCs while a teacher looked on. What Fraser saw on their screens came as a bit of a shock.

Fraser, who was in Da Nang visiting his girlfriend's family, works in Google's education department in Mountain View, teaching JavaScript to new recruits. His interest in computer science education often takes him to high schools around the Bay Area, where he tells students that code is fun and interesting, and learning it can open doors after graduation.

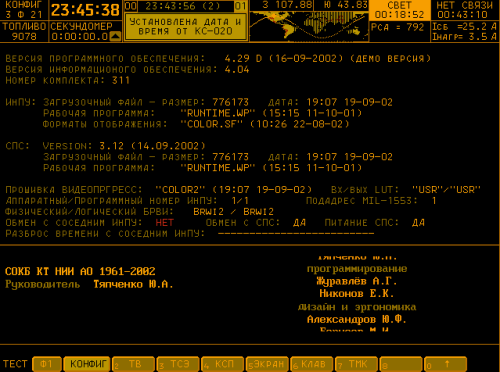

The fifth-graders in Da Nang were doing exercises in Logo, a simple program developed at MIT in the 1970s to introduce children to programming. A turtle-shaped avatar blinked on their screens and the kids fed it simple commands in Logo's language, making it move around, leaving a colored trail behind. Stars, hexagons, and ovals bloomed on the monitors.

Simple commands in Logo. (MIT Media Lab)

Fraser, who learned Logo when the program was briefly popular in American elementary schools, recognized the exercise. It was a lesson in loops, a bedrock programming concept in which you tell the machine to do the same thing over and over again, until you get a desired result. "A quick comparison with the United States is in order," Fraser wrote later in a blog post. At Galileo Academy, San Francisco's magnet school for science and technology, he'd found juniors in a computer science class struggling with the concept of loops. The fifth-graders in Da Nang had outpaced upperclassmen at one of the Bay Area's most tech-savvy high schools.
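For readers who have never seen one, a loop is short to write down. In Logo, a hexagon can be drawn with something like `REPEAT 6 [FORWARD 50 RIGHT 60]`. The Python sketch below follows the same "move forward, then turn" recipe without any graphics, simply recording the points the turtle would visit. The `trace_polygon` function and the step size of 50 are assumptions made for this example, not the exercise Fraser observed.

```python
import math

def trace_polygon(sides, step):
    """Repeat 'move forward, turn right' and record each point visited."""
    x, y, heading = 0.0, 0.0, 0.0  # heading is measured in degrees
    points = [(x, y)]
    for _ in range(sides):  # the loop: same two instructions, over and over
        x += step * math.cos(math.radians(heading))
        y += step * math.sin(math.radians(heading))
        points.append((round(x, 6), round(y, 6)))
        heading -= 360 / sides  # turn right by the polygon's exterior angle
    return points

hexagon = trace_polygon(6, 50)
for point in hexagon:
    print(point)
```

Changing the 6 to a 3 or an 8 yields a triangle or an octagon with no new instructions, which is the point of the lesson.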

Another visit to an 11th-grade classroom in Ho Chi Minh City revealed students coding their way through a logic puzzle embedded in a digital maze. "After returning to the US, I asked a senior engineer how he'd rank this question on a Google interview," Fraser wrote. "Without knowing the source of the question, he judged that this would be in the top third."

Early code education isn't just happening in Vietnamese schools. Estonia, the birthplace of Skype, rolled out a countrywide programming-centric curriculum for students as young as six in 2012. In September, the United Kingdom will launch a mandatory computing syllabus for all students ages 5 to 16.

Meanwhile, even as US enrollment in almost all other STEM (science, technology, engineering, and math) fields has grown over the last 20 years, computer science has actually lost students, dropping from 25 percent of high school students earning credits in computer science to only 19 percent by 2009, according to the National Center for Education Statistics.

"Our kids are competing with kids from countries that have made computer science education a No. 1 priority," says Chris Stephenson, the former head of the Computer Science Teachers Association (CSTA). Unlike countries with federally mandated curricula, in the United States computer lesson plans can vary widely between states and even between schools in the same district. "It's almost like you have to go one school at a time," Stephenson says. In fact, currently only 20 states and Washington, DC, allow computer science to count toward core graduation requirements in math or science, and not one requires students to take a computer science course to graduate. Nor do the new Common Core standards, a push to make K-12 curricula more uniform across states, include computer science requirements.

It's no surprise, then, that the AP computer science course is among the College Board's least popular offerings; last year, almost four times as many students tested in geography (114,000) as in computer science (31,000). And most kids don't even get to make that choice; only 17 percent of US high schools that have Advanced Placement courses offer one in CS. It was 20 percent in 2005.

For those who do take an AP computer science class—a yearlong course in Java, which is sort of like teaching cooking by showing how to assemble a KitchenAid—it won't count toward core graduation requirements in most states. What's more, many counselors see AP CS as a potential GPA ding, and urge students to load up on known quantities like AP English or US history. "High school kids are overloaded already," says Joanna Goode, a leading researcher at the University of Oregon's education department, and making time for courses that don't count toward anything is a hard sell.

In any case, it's hard to find anyone to teach these classes. Unlike fields such as English and chemistry, there isn't a standard path for aspiring CS teachers in grad school or continuing education programs. And thanks to wildly inconsistent certification rules between states, certified CS teachers can get stuck teaching math or library sciences if they move. Meanwhile, software whizzes often find the lure of the startup salary much stronger than the call of the classroom, and anyone who tires of Silicon Valley might find that its "move fast and break things" mantra doesn't transfer neatly to pedagogy.

And while many kids have mad skills in movie editing or Photoshopping, such talents can lull parents into thinking they're learning real computing. "We teach our kids how to be consumers of technology, not creators of technology," notes the NSF's Cuny.

Or, as Cory Doctorow, an editor of the technology-focused blog Boing Boing, put it in a manifesto titled "Why I Won't Buy an iPad": "Buying an iPad for your kids isn't a means of jump-starting the realization that the world is yours to take apart and reassemble; it's a way of telling your offspring that even changing the batteries is something you have to leave to the professionals."

But school administrators know that gleaming banks of shiny new machines go a long way in impressing parents and school boards. Last summer, the Los Angeles Unified School District set aside a billion dollars to buy an iPad for all 640,000 children in the district. To pay for the program, the district dipped into school construction bonds. Still, some parents and principals told the Los Angeles Times they were thrilled about it. "It gives us the sense of hope that these kids are being looked after," said one parent.[2]

[2] The kids did quickly learn to hack their iPads, so there's some hope for actual inventiveness.

Sure, some schools are woefully behind on the hardware equation, but according to a 2010 federal study, only 3 percent of teachers nationwide lacked daily access to a computer in their classroom, and the nationwide ratio of students to school computers was a little more than 5-to-1. As to whether kids have computers at home—that doesn't seem to make much difference in overall performance, either. A study from the National Bureau of Economic Research reviewed the grades, test scores, homework, and attendance of California 6th- to 10th-graders who were randomly given computers to use at home for the first time. A year later, the study found, nothing happened. Test scores, grades, disciplinary actions, time spent on homework: None of it went up or down—except the kids did log a lot more time playing games.

One sunny morning last summer, 40 Los Angeles teachers sat in a warm classroom at UCLA playing with crayons, flash cards, and Legos. They were students again for a week, at a workshop on how to teach computer science. Which meant that first they had to learn computer science.

The lesson was in binary numbers, or how to write any number using just two digits. "Computers can only talk in ones and zeros," explained the instructor, a fellow teacher who'd taken the same course. "You gotta talk to them in their language." The course is funded by the National Science Foundation, and so is the experimental new blueprint it trains teachers to use, called Exploring Computer Science (ECS).

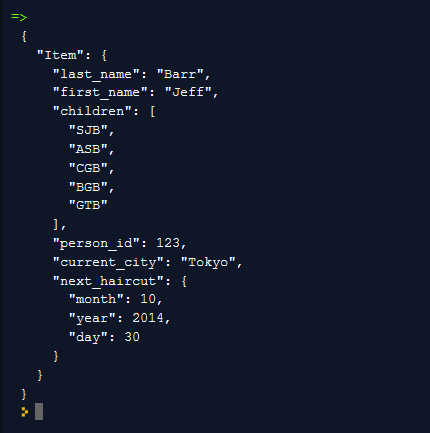

Made sense at first, but when it came to turning the number 1,250 into binary, the class started falling apart. At one table, two female teachers politely endured a long, wrong explanation from an older male colleague. A teacher behind them mumbled, "I don't get it," pushed his flash cards away, and counted the minutes to lunchtime. A table of guys in their 30s was loudly sprinting toward an answer, and a minute later the bearded white guy at the head of their table, i.e., the one most resembling a classic programmer, shot his hand up with the answer and an explanation of how he got there: "Basically what you do is, you just turn it into an algorithm." Blank stares suggested few colleagues knew what an algorithm was—in this case a simple, step-by-step process for turning a number into binary. (The answer, if you're curious, is 010011100010.)
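The step-by-step process in question can be written out in a handful of lines. The Python sketch below uses one common method, repeated division by 2; the workshop may have taught a different procedure with its flash cards, and the `to_binary` name is just a label chosen for this example.

```python
def to_binary(n):
    """Convert a non-negative integer to binary by repeated division by 2."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # each remainder is the next digit, right to left
        n //= 2                    # halve the number and repeat
    return "".join(reversed(digits))

print(to_binary(1250))            # 10011100010
print(to_binary(1250).zfill(12))  # 010011100010, padded to twelve digits
```

The leading zero in the twelve-digit answer is padding; it does not change the value.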

This lesson—which by the end of the day clicked for most in the class—might seem like most people's image of CS, but the course these teachers are learning to teach couldn't look more different from classic AP computer science. Much of what's taught in ECS is about the why of computer science, not just the how. There are discussions and writing assignments on everything from personal privacy in the age of Big Data to the ethics of robot labor to how data analysis could help curb problems like school bullying. Instead of rote Java learning, it offers lots of logic games and puzzles that put the focus on computing, not computers. In fact, students hardly touch a computer for the first 12 weeks.

"Our curriculum doesn't lead with programming or code," says Jane Margolis, a senior researcher at UCLA who helped design the ECS curriculum and whose book Stuck in the Shallow End: Education, Race, and Computing provides much of the theory behind the lesson plans. "There are so many stereotypes associated with coding, and often it doesn't give the broader picture of what the field is about. The research shows you want to contextualize, show how computer science is relevant to their lives." ECS lessons ask students to imagine how they'd make use of various algorithms as a chef, or a carpenter, or a teacher, how they could analyze their own snack habits to eat better, and how their city council could use data to create cleaner, safer streets.

The ECS curriculum is now offered to 2,400 students at 31 Los Angeles public high schools and a smattering of schools in other cities, notably Chicago and Washington, DC. Before writing it, Margolis and fellow researchers spent three years visiting schools across the Los Angeles area—overcrowded urban ones and plush suburban ones—to understand why few girls and students of color were taking computer science. At a tony school in West LA that the researchers dubbed "Canyon Charter High," they noticed students of color traveling long distances to get to school, meaning they couldn't stick around for techie extracurriculars or to simply hang out with like-minded students.

Equally daunting were the stereotypes. Take Janet, the sole black girl in Canyon's AP computer science class, who told the researchers she signed up for the course in part "because we [African American females] were so limited in the world, you know, and just being able to be in a class where I can represent who I am and my culture was really important to me." When she had a hard time keeping up—like most kids in the class—the teacher, a former software developer who, researchers noted, tended to let a few white boys monopolize her attention, pulled Janet aside and suggested she drop the class, explaining that when it comes to computational skills, you either "have it or don't have it."

Research shows that girls tend to pull away from STEM subjects, including computer science, around middle school, while rates of boys in these classes stay steady. Fortunately, says Margolis, there's evidence that tweaking the way computer science is introduced can make a difference. A 2009 study tested various messages about computer science with college-bound teens. It found that explaining how programming skills can be used to "do good" (connect with one's community, make a difference on big social problems like pollution and health care) resonated strongly with girls. Far less successful were messages about getting a good job or being "in the driver's seat" of technological innovation, i.e., the dominant cultural narratives about why anyone would learn to code.

"For me, computer science can be used to implement social change," says Kim Merino, a self-described "social-justice-obsessed queer Latina nerd history teacher" who decided to take the ECS training a couple of years ago. Now, she teaches the class to middle and high schoolers at the UCLA Community School, an experimental new public K-12 school. "I saw this as a new frontier in the social-justice fight," she says. "I tell my students, 'I don't necessarily want to teach you how to get rich. I want to teach you to be a good citizen.'"

Merino's father was an aerospace engineer for Lockheed Martin. So you might think adapting to CS would be easy for her. Not quite. Most of the teachers she trained with were men. "Out of seven women, there were two of color. Honestly, I was so scared. But now, I take that to my classroom. At this point my class is half girls, mostly Latina and Korean, and they still come into my class all nervous and intimidated. My job is to get them past all of that, get them excited about all the things they could do in their lives with programming."

Merino has spent the last four years teaching kids of color growing up in inner cities to imagine what they could do with programming—not as a replacement for, but as part of their dreams of growing up to be doctors or painters or social workers. But Merino's partner's gentle ribbings about how they'd ever start a family on a teacher's salary eventually became less gentle. She just took a job as director of professional development at CodeHS, an educational startup in San Francisco.

"We teach our kids how to be consumers of technology, not creators of technology."

It was a little more than a century ago that literacy became universal in Western Europe and the United States. If computational skills are on the same trajectory, how much are we hurting our economy—and our democracy—by not moving faster to make them universal?

There's the talent squeeze, for one thing. Going by the number of computer science majors graduating each year, we're producing less than half of the talent needed to fill the Labor Department's job projections. Women currently make up 20 percent of the software workforce, blacks and Latinos around 5 percent each. Getting more of them in the computing pipeline is simply good business sense.

It would also create a future for computing that more accurately reflects its past. In 1843, a female mathematician named Ada Lovelace wrote the first algorithm ever intended to be executed on a machine. The term "programmer" was used during World War II to describe the women who worked on the world's first large-scale electronic computer, the ENIAC machine, which used calculus to come up with tables to improve artillery accuracy. 3 In 1949, Rear Adm. Grace Hopper helped develop the UNIVAC, one of the first commercial general-purpose computers, a.k.a. a mainframe, and in 1959 her work led to the development of COBOL, the first programming language written for commercial use.

Excluding huge swaths of the population also means prematurely killing off untold ideas and innovations that could make everyone's lives better. Because while the rash of meal delivery and dating apps designed by today's mostly young, male, urban programmers are no doubt useful, a broader base of talent might produce more for society than a frictionless Saturday night. 4

3 Six "ENIAC girls" did most of the programming, but until recently their work was all but forgotten. Male engineers worked on ENIAC's hardware, a division of labor reflecting the view, common until the 1950s, that coding was clerical work, even though it always involved higher math and applied logic. It was recast as a masculine pursuit as projects like Grace Hopper's UNIVAC demonstrated its promise.

And there's evidence that diverse teams produce better products. A study of 200,000 IT patents found that "patents invented by mixed-gender teams are cited [by other inventors] more often than patents invented by female-only or male-only" teams. The authors suggest one possibility for this finding may be "that gender diversity leads to more innovative research and discovery." (Similarly, research papers across the sciences that are coauthored by racially diverse teams are more likely to be cited by other researchers than those of all-white teams.)

Fortunately, there's evidence that girls exposed to very basic programming concepts early in life are more likely to major in computer science in college. That's why approaches like Margolis' ECS course, steeped in research on how to get and keep girls and other underrepresented minorities in computer science class, as well as groups like Black Girls Code, which offers affordable code boot camps to school-age girls in places like Detroit and Memphis, may prove appealing to the industry at large.

4 For example, Janet Emerson Bashen was the first black woman to receive a software patent, in 2006, for an app to better process Equal Employment Opportunity claims.

"Computer science innovation is changing our entire lives, from the professional to the personal, even our free time," Margolis says. "I want a whole diversity of people sitting at the design table, bringing different sensibilities and values and experiences to this innovation. Asking, 'Is this good for this world? Not good for the world? What are the implications going to be?'"

We make kids learn about biology, literature, history, and geometry with the promise that navigating the wider world will be easier for their efforts. It'll be harder and harder not to include computing on that list. Decisions made by a narrow demographic of technocrat elites are already shaping their lives, from privacy and social currency, to career choices and how they spend their free time.

Black Girls Code has introduced more than 1,500 girls to programming. Black Girls Code

Margolis' program and others like it are a good start toward spreading computational literacy, but they need a tremendous amount of help to scale up to the point where it's not such a notable loss when a teacher like Kim Merino leaves the profession. What's needed to make that happen is for people who may never learn a lick of code themselves to help shape the tech revolution the old-fashioned way, through educational reform and funding for schools and volunteer literacy crusades. Otherwise, we're all doomed—well, most of us, anyway—to be stuck in the Dark Ages.

Illustration by Charis Tsevis. Web production by Tasneem Raja.

The static screenshot doesn't do this justice. Click the link!
