Recently the author of xkcd, Randall Munroe, was asked how long someone would need to fall in order to jump out of an airplane, fill a large balloon with helium on the way down, and land safely. Randall unfortunately ran into some difficulties with completing his calculation, including getting his IP address banned by Wolfram|Alpha. (No worries: we received his request and have already fixed that.)

While I don’t pretend to give as rigorous a calculation as he attempted, let’s see if we can’t come up with a rough answer of our own.

The first things to determine are the initial and final weights for the person, the balloon, the helium, and, very importantly, the helium tanks that the person carries.

The average weight of a human being is 62 kilograms. Let’s round this up to 70 kilograms to account for clothing as well as whatever safety gear and harnesses are necessary.

The weight of the balloon depends on how much helium we’re using. We’re going to assume for the purposes of this calculation that we want to achieve neutral buoyancy for the balloon and the person (we will presume that the tanks are jettisoned once the balloon is full).

We’ll also assume we’re using some sort of modified weather balloon. The larger weather balloons can weigh 600 grams with an inflated diameter of 177 centimeters. Assuming a roughly spherical balloon, this yields a surface area of 98,423 square centimeters, which gives a surface density of 0.00609614 grams per square centimeter.
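As a quick check on the arithmetic above, here is a minimal Python sketch that recomputes the surface area and surface density from the quoted balloon mass and diameter. The variable names are my own; the only inputs are the 600 gram and 177 centimeter figures.

```python
import math

# Figures quoted in the post for a large weather balloon.
balloon_mass_g = 600.0
diameter_cm = 177.0

# Surface area of a sphere: 4*pi*r^2, which equals pi*d^2.
surface_area_cm2 = math.pi * diameter_cm ** 2

# Mass per unit area of the balloon skin.
surface_density_g_per_cm2 = balloon_mass_g / surface_area_cm2

print(f"surface area: {surface_area_cm2:,.0f} cm^2")
print(f"surface density: {surface_density_g_per_cm2:.8f} g/cm^2")
```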

The buoyancy of the balloon and person is equal to the difference between the mass of the air displaced and the mass of the helium inside the balloon as well as the balloon itself and the person. We want these to balance out, so we need to solve this equation:

$$\frac{4}{3}\pi r^3 \rho_{\text{Air}} = \frac{4}{3}\pi r^3 \rho_{\text{He}} + 4\pi r^2 s_{\text{balloon}} + m_{\text{person}}$$

where $\rho$ refers to the density of the gas, with the subscript indicating “Air” or helium, $r$ is the radius of the balloon, $s_{\text{balloon}}$ refers to the surface density of the balloon calculated above, and $m_{\text{person}}$ is the mass of the person.

Air density varies with altitude. At sea level it’s 1.225 kilograms per cubic meter, while at 5,000 feet it’s 1.1 kilograms per cubic meter. Since we are mostly interested in stopping near the ground, we will assume a value of 1.2 kilograms per cubic meter. The density of helium at normal pressure is only 1.785 × 10^-4 grams per cubic centimeter, or 0.1785 kilograms per cubic meter. This would also decrease with altitude, but for our purposes we will treat it as constant.

Now we can solve for r:

A 2.6 meter radius sphere yields a volume of 74 cubic meters, or 2,613 cubic feet. This is how much helium will be needed.
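The radius can be checked numerically. The sketch below is my own, not the original Wolfram Language input: it assumes neutral buoyancy means displaced air mass equals helium plus balloon skin plus person, and finds the radius by bisection. All densities are converted to SI units, and the function names are hypothetical.

```python
import math

RHO_AIR = 1.2          # kg/m^3, near-ground value chosen in the post
RHO_HE = 0.1785        # kg/m^3, i.e. 1.785e-4 g/cm^3
S_BALLOON = 0.0609614  # kg/m^2, i.e. 0.00609614 g/cm^2
M_PERSON = 70.0        # kg

def net_lift(r):
    """Displaced air mass minus helium, balloon skin, and person (kg)."""
    volume = 4.0 / 3.0 * math.pi * r ** 3
    area = 4.0 * math.pi * r ** 2
    return volume * (RHO_AIR - RHO_HE) - area * S_BALLOON - M_PERSON

def solve_radius(lo=0.1, hi=10.0, tol=1e-9):
    """Bisection: net_lift is negative at lo and positive at hi."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if net_lift(mid) < 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

radius_m = solve_radius()
volume_m3 = 4.0 / 3.0 * math.pi * radius_m ** 3
volume_ft3 = volume_m3 / 0.3048 ** 3
print(f"r = {radius_m:.2f} m, V = {volume_m3:.1f} m^3 = {volume_ft3:.0f} ft^3")
```

The unrounded volume comes out a touch under 74 cubic meters; the 2,613 cubic feet quoted above corresponds to the rounded 74 cubic meter figure.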

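Our final mass (person, balloon, and helium) will also equal the mass of the air displaced by the balloon, which works out to roughly 88 kilograms.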

Now, to determine the initial mass, we need to know how much mass the containers have. A capacity of 250 cubic feet is at the upper end of standard helium container sizes. Some searching around suggests that an empty container of that size weighs 108 pounds, or 49 kilograms, and has an internal volume of 43 liters and a diameter of roughly 23 centimeters. The person will need at least 11 of them to fill the balloon. (I hope it’s not a commercial flight, since checking the canisters as luggage at today’s rates would cost roughly $600. Before tax.)
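The container count follows from rounding up. A short sketch of that bookkeeping, with variable names of my own choosing and only the figures quoted above as inputs:

```python
import math

balloon_volume_ft3 = 2613      # helium needed, from the balloon sizing
container_capacity_ft3 = 250   # gas held by one container
container_empty_mass_kg = 49   # empty container, about 108 pounds

# A partly used container still has to be carried, so round up.
containers = math.ceil(balloon_volume_ft3 / container_capacity_ft3)
total_gas_ft3 = containers * container_capacity_ft3
total_tank_mass_kg = containers * container_empty_mass_kg

print(containers, total_gas_ft3, total_tank_mass_kg)
```

The empty containers alone outweigh the jumper several times over, which is why jettisoning them matters so much for the deceleration.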

Of crucial importance is how fast the balloon can be inflated. Too fast and the deceleration becomes dangerously high; too slow and our person hits the ground.

The time a container takes to release a percentage of its mass can be approximated by the following equation:

R. B. Bird, W. E. Stewart, and E. N. Lightfoot, Transport Phenomena, New York:John Wiley & Sons, 1960

where

F is the fraction of the gas remaining in the container,

k is the heat capacity ratio (1.667 for helium),

C is the discharge coefficient,

A is the cross-sectional area of the opening,

V is the volume of the container,

$\rho_0$ is the initial gas density, and

$P_0$ is the initial pressure. This represents an uncontrolled release of gas, but we do want to fill the balloon as quickly as possible.

The discharge coefficient is the ratio of the actual discharge to the theoretical discharge. For this example, we will assume the value is 0.72. If the nozzle is 2 millimeters in diameter, that yields a cross-sectional area of 3.142 square millimeters.

To determine the initial pressure and density, we can treat the helium as an ideal gas and use the ideal gas law:

$$P V = n R T$$

where $P$ is the pressure, $V$ is the volume of the container, $n$ is the number of moles of helium, $R$ is the molar gas constant, and $T$ is the temperature. Assuming a constant temperature of 0 °C, we can work out that 250 cubic feet of helium gas at standard pressure and temperature contains 315.839 moles. Putting these values into the ideal gas law with the 43 liter container volume, we find that the initial pressure is 16.681 megapascals.

Density is just the mass of the helium divided by the volume of the container:

$$\rho_0 = \frac{m_{\text{He}}}{V}$$
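The ideal gas figures can be reproduced in a few lines. This is a sketch under stated assumptions: standard pressure is taken as 101,325 pascals and the molar mass of helium as 4.002602 grams per mole, neither of which is given in the text, and the variable names are my own.

```python
R = 8.314462618          # J/(mol*K), molar gas constant
T = 273.15               # K, the 0 degrees Celsius assumed in the post
P_STANDARD = 101325.0    # Pa, standard pressure (assumption)
FT3_TO_M3 = 0.3048 ** 3  # cubic feet to cubic meters
M_HELIUM = 4.002602e-3   # kg/mol, molar mass of helium (assumption)

gas_volume_m3 = 250 * FT3_TO_M3  # helium volume at standard conditions
container_volume_m3 = 0.043      # 43 liter internal volume

# n = P V / (R T) at standard conditions
moles = P_STANDARD * gas_volume_m3 / (R * T)

# The same gas squeezed into the container: P0 = n R T / V
p0_pa = moles * R * T / container_volume_m3

# rho0 = m / V
rho0 = moles * M_HELIUM / container_volume_m3

print(f"n = {moles:.1f} mol, P0 = {p0_pa / 1e6:.2f} MPa, rho0 = {rho0:.1f} kg/m^3")
```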

We will assume that the person wants to empty 90% of each container before switching to a new one. The process of diminishing returns in emptying a container of gas means you can never really empty it. Placing these numbers into our equation, we find the time to be:

That is obviously too long to wait for a single container to empty. In fact, we can see that as more time progresses, the outflow of the container gets slower and slower:

We can speed this up by making a bigger hole. We might also use some apparatus to release the gas from all the containers at once. If we do this and increase the nozzle diameter of each container by a factor of 5, to 10 millimeters, then the time to empty all the containers is only:

So the person falling could in theory fill the balloon in time by rapidly (and dangerously) evacuating the compressed gas containers. Then how fast would the person decelerate?

Well, that depends on the drag created. The equation for drag force is:

$$F_d = \frac{1}{2} \rho u^2 C_d A$$

where $F_d$ is the drag force, $C_d$ is the drag coefficient, $\rho$ is the mass density of the surrounding air, $u$ is the speed relative to the air, and $A$ is the cross-sectional area. $C_d$ depends on the shape of the object. Initially the drag coefficient would be quite low, since the assembly would consist of a ring of gas containers and a hurriedly working occupant. Ultimately we can consider the entire system a sphere, with a person tethered to the bottom, once the containers are dropped.

So, how long would it take for our jumper to fall without the balloon being inflated? Assuming the jump is at 12,500 feet, which is a common height for parachuting, we need to determine the drag coefficient and the cross-sectional area. We will assume the drag coefficient is comparable to that of a long streamlined body like the individual compressed gas containers, which would be around 0.1.

We can approximate the cross-sectional area by treating the 11 containers arranged in a ring as a circle. With a circumference of 11 × 23 centimeters, or 2.53 meters, the circle has an area of roughly 0.509 square meters. Turning to Wolfram|Alpha, we find that the time to fall would be 29 seconds and that the final velocity would be 250 meters per second, or 570 miles per hour.
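For readers without Wolfram|Alpha at hand, the fall can be approximated with the closed-form solution for quadratic drag at constant air density. This is a sketch, not the original query. The total mass is my own estimate (person, 11 empty containers, and roughly 5 kilograms of balloon plus 14 kilograms of helium), and holding the air density at 1.2 kilograms per cubic meter is a simplification.

```python
import math

G = 9.81          # m/s^2
RHO_AIR = 1.2     # kg/m^3, held constant over the fall (assumption)
CD = 0.1          # drag coefficient from the post
AREA = 0.509      # m^2, ring of containers from the post
# Assumed total mass: 70 kg person + 11 empty containers at 49 kg
# + roughly 5 kg of balloon + roughly 14 kg of helium.
MASS = 70 + 11 * 49 + 5 + 14
FT = 0.3048       # meters per foot

def fall_with_drag(height_m):
    """Time (s) and speed (m/s) after falling height_m from rest."""
    v_term = math.sqrt(2 * MASS * G / (RHO_AIR * CD * AREA))
    # Invert x(t) = (v_term^2 / g) * ln(cosh(g t / v_term)) for t.
    y = math.acosh(math.exp(height_m * G / v_term ** 2))
    return y * v_term / G, v_term * math.tanh(y)

t_ground, v_ground = fall_with_drag(12500 * FT)  # all the way down
t_7500, v_7500 = fall_with_drag(5000 * FT)       # down to 7,500 feet

print(f"ground: {t_ground:.1f} s at {v_ground:.0f} m/s")
print(f"7,500 ft: {t_7500:.1f} s at {v_7500:.0f} m/s")
```

Under these assumptions the result should land close to the 29 seconds and 250 meters per second quoted above. A model with altitude-dependent air density would give a somewhat higher impact speed.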

So, the person has under 29 seconds to get the balloon filled, and we have worked out that it will take about 16 seconds once the valve on the contraption is turned on. If the balloon is inflated by 7,500 feet, the person would be moving at 170 meters per second. How quickly would the balloon slow the jumper down?

The cross-sectional area of a 2.6 meter radius sphere is 21.2 square meters. Under these conditions, we can write the acceleration due to drag as a function of velocity by taking the drag equation (equation 4) and dividing both sides by the initial mass:

A safe landing speed would be something less than 10 miles per hour, or 4.47 meters per second. To find when the person would reach that speed, we need to solve the equation of motion for our system:

The left-hand side of the equation is our usual mass times acceleration. On the right-hand side, we have our drag force plus the force of gravity pulling down and the buoyancy forces pushing up. The last two terms cancel out in our case. Plugging this into NDSolve, we can get a numerical solution to the problem:

This yields a curve with very rapid deceleration that tails off slowly. It would take roughly 3.5 seconds to slow down to a safe speed.

If this acceleration occurred instantly, it would be dangerously high:

1701.84 meters per second squared is over 170 Gs, and is likely instantly fatal.
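Because gravity and buoyancy cancel, the equation of motion reduces to drag alone, which has a simple closed form. The sketch below assumes the drag deceleration scales as the square of the speed and back-calculates the constant from the 1701.84 meters per second squared figure at 170 meters per second. That constant is my inference, not a value stated in the post.

```python
G = 9.81        # m/s^2
V0 = 170.0      # m/s, speed when the balloon is full
A0 = 1701.84    # m/s^2, drag deceleration at V0 quoted in the post
V_SAFE = 4.47   # m/s, about 10 miles per hour

# Assumption: a = c * v^2, with c back-calculated from the quoted figure.
c = A0 / V0 ** 2  # units of 1/m

# Peak load in multiples of g.
g_load = A0 / G

# dv/dt = -c v^2 integrates to 1/v - 1/v0 = c t.
time_to_safe_s = (1 / V_SAFE - 1 / V0) / c

def speed_at(t):
    """Speed (m/s) t seconds after full drag is applied."""
    return 1 / (1 / V0 + c * t)

print(f"peak load: {g_load:.0f} g, time to safe speed: {time_to_safe_s:.1f} s")
```

The time to reach a safe speed comes out at a few seconds, the same order as the roughly 3.5 seconds read off the numerical solution.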

Luckily the jumper would have already begun decelerating while the balloon was inflated. Let’s try to approximate that.

We will start by going back to our equation for the time it takes to empty a gas container. We can break this into a constant term and a portion based on F and t.

We are then left with the equation:

t==(F[t]^((1-1.667)/2)-1) constant

Rearranging this, we find that the equation for the gas remaining in the containers is:

F[t]==(t/constant+1)^(2/(1-1.667))
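The two forms above are inverses of each other, which a short Python sketch can confirm. The expression for the constant is my own reconstruction from a standard isentropic, choked-flow derivation using the variables listed earlier (k, C, A, V, the initial density, and the initial pressure). It is an assumption and not necessarily the exact form used in this post, so absolute times from it may differ from the figures quoted here. The initial density of about 29.4 kilograms per cubic meter is likewise my own evaluation of mass over volume.

```python
import math

K = 1.667  # heat capacity ratio for helium

def blowdown_constant(c_d, area_m2, volume_m3, rho0, p0_pa, k=K):
    """Assumed prefactor for isentropic, choked discharge (seconds)."""
    return (
        2 / (k - 1)
        * ((k + 1) / 2) ** ((k + 1) / (2 * (k - 1)))
        * volume_m3 / (c_d * area_m2)
        * math.sqrt(rho0 / (k * p0_pa))
    )

def time_to_fraction(f, constant, k=K):
    """t = (F^((1-k)/2) - 1) * constant"""
    return (f ** ((1 - k) / 2) - 1) * constant

def fraction_at(t, constant, k=K):
    """F(t) = (t/constant + 1)^(2/(1-k))"""
    return (t / constant + 1) ** (2 / (1 - k))

def nozzle_area(diameter_m):
    """Cross-sectional area of a circular nozzle (m^2)."""
    return math.pi * (diameter_m / 2) ** 2

# Inputs from the post: C = 0.72, V = 43 L, P0 = 16.681 MPa.
small = blowdown_constant(0.72, nozzle_area(0.002), 0.043, 29.4, 16.681e6)
large = blowdown_constant(0.72, nozzle_area(0.010), 0.043, 29.4, 16.681e6)

# Time to release 90% of the gas (F = 0.1) through each nozzle.
t90_small = time_to_fraction(0.1, small)
t90_large = time_to_fraction(0.1, large)
print(f"speed-up from the wider nozzle: {t90_small / t90_large:.0f}x")
```

Whatever the exact prefactor, the time scales inversely with nozzle area, so going from a 2 millimeter to a 10 millimeter nozzle shortens the discharge by a factor of 25.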

But we will need the equation for the gas being released. We will also want to compensate for the fact that the total gas volume (2,750 cubic feet) is greater than the balloon’s volume (2,613 cubic feet).

This gives us an equation for how filled the balloon is.

Next we derive the area in terms of time:

From this we can get the drag force in terms of time and velocity at the initial point of the drop. We will assume that the partially inflated balloon along with the containers has a drag coefficient of 0.2.

We will also need to determine the buoyancy as a function of time. This is just a matter of finding how much air has been displaced by the partially inflated balloon. We take the ratio of the final mass and the initial mass and multiply it by gravity and the percentage of the balloon filled:

Finally, we return to our equation to solve for the velocity starting at the moment of the drop:

So now we can see that the jumper accelerates until the balloon becomes large enough to stop acceleration, which happens after about 10 seconds.

From the above calculations, we find the person has traveled only about 298 meters, or 1,000 feet, and is now moving at 39.8 meters per second, 89 miles per hour. If the containers were dropped right now, the deceleration would be:

This is over 9 Gs, which is still dangerously high. Luckily the ground is still a long way away. If, for example, 8 of the containers were dropped, the deceleration would be:

Or a bit under 2 Gs. Once the speed was stabilized, the person could drop the rest of the containers to reach a safe speed.

So, 10 seconds after the initial jump, 8 containers are dropped, with 3 remaining.

At the end of another 10 seconds (20 seconds into the jump), the speed has been reduced to under 20 meters per second. Now the person can drop the rest.

The jumper suffers a momentary deceleration of 2.2 Gs, then quickly slows to a safe velocity.

Putting these stages together, we find a fast drop in speed at the beginning of each stage, and after 22 seconds the velocity has been reduced to a safe level.

The total distance fallen is 598 meters, or only 1,962 feet.

Our jumper would now be floating several thousand feet in the air. The descent would gradually slow with drag, or continue until the force of the wind overcame the jumper’s inertia and blew the person across the sky. Now the jumper just has to figure out how to get down.


Und mittlerweile zahlen die Lufthanseaten ja auch wieder eine anständige Dividende.

Und mittlerweile zahlen die Lufthanseaten ja auch wieder eine anständige Dividende. Die aktuelle Kursexplosion bei Bitcoin und Ethereum macht Mining zwar wieder etwas interessanter. Aber mehr als ein Hobbyprojekt wird das für mich nie werden, das Geld muss anderswo verdient werden.

Die aktuelle Kursexplosion bei Bitcoin und Ethereum macht Mining zwar wieder etwas interessanter. Aber mehr als ein Hobbyprojekt wird das für mich nie werden, das Geld muss anderswo verdient werden.

Goodbye

Goodbye

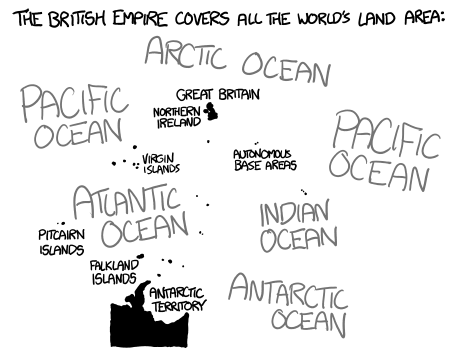

![The walls of Buckingham Palace are lined with [in case of emergency, break glass] boxes. Inside each one is a cup of tea.](http://what-if.xkcd.com/imgs/a/48/empire_eclipse.png)