Shared posts

The New Hartsyard 2.0 Is Here & It's Nothing Like It Used To Be!

Fergus Noodle: I dunno it used to be bad and this food also looks bad

Brushtail possum attacked by ravens

Chart of the Day: Does Your State Allow Police to Have Sex With People They Arrest?

In 35 states, it’s legal for cops to detain and have sex with someone in their custody. Is your state one of them?

Yesterday, Buzzfeed News published an investigative piece about Anna Chambers, a New York teenager pressing rape charges against Eddie Martins and Richard Hall, two members of the New York Police Department. Last fall, Anna was picked up by the two cops who told her two male friends to leave, handcuffed her, and led her into their van. According to Anna’s lawyer, the policemen ordered her to undress — and when they didn’t find drugs, they raped her.

It’s a stunning story of state violence, of cops using their guns, their badges, and their impunity to attack vulnerable women. Anna’s far from alone: sexual assault is the second most commonly reported form of police misconduct and brutality (after excessive force). A 2015 investigation found that over 1,000 officers across America have lost their badges because of sexual assault, and the report noted that number is “unquestionably an undercount” because many states, including New York, don’t keep state records of decertified cops. Further, sexual violence and police violence are highly underreported, meaning these numbers represent a mere fraction of the actual prevalence of police-perpetrated sexual violence.

You’d think this would have been an open-and-shut case. Anna’s forensic exam (commonly known as a ‘rape kit’) matched Martins’ and Hall’s DNA, and a security camera shows the detectives leaving her on the side of a street a quarter-mile from a police station. Anna says she repeatedly told the detectives no; the detectives say it was consensual.

To be clear, I completely believe Anna. But even if she hadn’t verbally said no, these two cops picked up a teenage girl, detained her in a police van, and then had sex with her while she was in their custody. They exploited the immense difference in power between an armed police officer and a civilian locked in the back of their car, a difference in power that could easily coerce someone into saying yes to sexual contact they absolutely don’t want.

A person in police custody can’t give genuine consent, free from coercion. Not to armed police officers who have the power to arrest them if they say no.

But here’s the kicker: Buzzfeed’s investigation found that in 35 states, it’s legal for police to have sex with people in their custody.

The criminal legal system makes the people caught up in it vulnerable to sexual violence. That’s why, under New York state law, it’s illegal for prison guards to have sex with incarcerated people or for parole officers to have sex with the parolees they oversee. If you say no to your parole officer, they might send you back to prison. If you say no to a prison guard, they might put you in solitary confinement. Police officers are no different: if you’re in the back of a cop’s car, you are at their mercy. The police shouldn’t be able to exploit that power differential to force themselves on vulnerable women. Yet in most states in America, they can do so and face no legal consequences.

Thanks to the #MeToo movement, long overdue legislation to fight harassment and violence is finally gaining steam. Still, even now, few headlines and legislators are tackling the problem of police sexual violence, perhaps because its victims are mostly poor women, especially women of color. It’s about time for our righteous rage to tackle the Eddie Martins, Richard Halls, and Daniel Holtzclaws of the world, who, just like Harvey Weinstein, grossly abuse their power to abuse women.

Outraged by Anna’s story, New York City Council Member Mark Treyger has proposed a bill that would prohibit police officers from having sex with anyone in their custody. Bills like his should be introduced — and passed — in every state on this map.

The Inaugural United Airlines Sydney To Houston Flight!

Fergus Noodle: Did she have an affair with Craig?

hot summers day and water.

Fergus Noodle: This was so long ago! Before Clemmie's ears exploded :(

Angus said enough is enough I'm sitting on the lounge where it's dry.

Clemmy just wants to give him a cuddle.

Well time to dry off now, bye for now see you next time.

Hello Yellow Deli, Katoomba

Fergus Noodle: Yeah, cool Lorraine - http://www.smh.com.au/nsw/secrets-of-the-family-20131208-2z00t.html

Serena Williams had to push for treatment for life-threatening postnatal complication

The headline of one of ProPublica’s recent articles in an excellent and devastating series on maternal health in the United States reads: “Nothing Protects Black Women From Dying in Pregnancy and Childbirth.” The subtitle continued: “Not education. Not income. Not even being an expert on racial disparities in health care.” You can apparently add to that: Not even being the greatest athlete in the world.

A cover story in Vogue yesterday recounted Serena Williams’ harrowing childbirth experience, in which she had to insist health care providers perform a CT scan to check for a pulmonary embolism when she suddenly began to have trouble breathing:

Though she had an enviably easy pregnancy, what followed was the greatest medical ordeal of a life that has been punctuated by them. Olympia was born by emergency C-section after her heart rate dove dangerously low during contractions. The surgery went off without a hitch; Alexis cut the cord, and the wailing newborn fell silent the moment she was laid on her mother’s chest. “That was an amazing feeling,” Serena remembers. “And then everything went bad.”

The next day, while recovering in the hospital, Serena suddenly felt short of breath. Because of her history of blood clots, and because she was off her daily anticoagulant regimen due to the recent surgery, she immediately assumed she was having another pulmonary embolism. (Serena lives in fear of blood clots.) She walked out of the hospital room so her mother wouldn’t worry and told the nearest nurse, between gasps, that she needed a CT scan with contrast and IV heparin (a blood thinner) right away. The nurse thought her pain medicine might be making her confused. But Serena insisted, and soon enough a doctor was performing an ultrasound of her legs. “I was like, a Doppler? I told you, I need a CT scan and a heparin drip,” she remembers telling the team. The ultrasound revealed nothing, so they sent her for the CT, and sure enough, several small blood clots had settled in her lungs. Minutes later she was on the drip. “I was like, listen to Dr. Williams!”

Williams’ medical saga continued, leaving her bedridden for six weeks, and ultimately turned out ok. But the fact that Serena Williams, who’d nearly died of a pulmonary embolism in 2001, who had just had a C-section which increases the risk of blood clots, and who is Serena goddamn Williams, who is rich and famous and is rich and famous specifically for the spectacular feats of her body, had to identify her symptoms herself and demand the screening needed for a potentially deadly complication is an incredible illustration of the deep sexism and racism that black women face in the medical system.

The ProPublica series, by Nina Martin and NPR’s Renee Montagne, has been exploring the myriad factors that contribute to the US’ shameful record on maternal health. The US has the highest rate of maternal mortality in the developed world, and there is an enormous racial disparity: black women are three to four times more likely to die than white women. And while pre-existing health conditions and lack of access to medical care are part of the problem, they aren’t the whole story. As Dr Elizabeth Howell tells the New York Times today, “Everyone always wants to say that it’s just about access to care and it’s just about insurance, but that alone doesn’t explain it.”

It is also about health care providers who, in their focus on the baby after a delivery, too often overlook serious complications in the mother and who too often just don’t listen to women’s—especially women of color’s—testimony of their symptoms. Not even a world-famous athlete’s.

A few days away for the beer fairy and a bit of a clean up.

A spider or two found at my son's house

This was my father's house. It looks so different now; there once was a huge tree out the front.

Pictures of boats

pictures of boats with beer fairy on the left

The swimming pool, everything looks very green they had had a bit of rain.

Banana benders are not always very polite but we are not always well behaved but I do love this little reminder telling everyone what is expected of them.

A bit of advertising on the golf course

a game of golf was on the cards.

I have reclaimed my front veranda; my son has taken all his bits and pieces left over from the house he sold in Sydney a few years back.

This is a great place for a cup of tea or coffee in summer as you catch the breezes and you can watch the world go by.

Most of the plants are fake as I once had a huge planter out here with lots of plants but the water never really got away.

and as you can see it caused a fair bit of damage I would like to get it repaired and repointed but these days they usually tear down and rebuild.

The front yard needs a lot of work but it is just too hot to do much at the moment.

but I did cut all my creeping fig down a lot of it had died this bit requires a ladder to get it down I was hoping it would just fall down I'm not fond of ladders.

A bit wild all round but when the weather cools I will tackle it we are expecting 42c today so most likely all day inside today.

Cooks River vandalised

Fergus Noodle: V shit

Cherry Ripe Snow Beanie Christmas Cake!

Fergus Noodle: This is a v strange post

Ginger Cooler

Why is Twitter Protecting Violent Islamophobes While Banning Everyone Else?

Conservative journalist Laura Loomer went on a hateful, Islamophobic rampage on Twitter on Wednesday, posting photos and video she took of Muslim women in New York City. Unsurprisingly, Twitter has done nothing about it.

Labeling herself a proud Islamophobe, Loomer called Muslims “fucking savages,” Islam a “cancer” and alleged that Muslims were “all the same” and should “never be let into the civilized world.” Loomer also claimed that she was late to work at a New York Police Department press conference because it took her 30 minutes to find an Uber or a Lyft not driven by a Muslim driver, calling it “insanity” and demanding a version of a ridesharing app that would exclude “Islamic immigration drivers.” Her actions were so toxic that even a company resistant to any sort of radical politics by any standard – Uber – was forced to ban her for her racist vitriol. (Lyft has since banned her as well).

There’s no need to explain how blatantly disgusting Loomer’s words are, and I hesitate to amplify her voice in any way using this platform. But the key question here is this: why is Twitter, the same company that just in this month has actively suppressed leftist voices (one of our editors included) in the name of security, allowing Loomer to continue using their website as a platform to spew unfiltered, hateful, racist nonsense? It was just a few days ago that Twitter temporarily suspended the account of leftist comedian Krang T. Nelson for what was clearly a joking tweet about Antifa “supersoldiers.” Twitter also caused a public uproar when, more seriously, they banned actress Rose McGowan for speaking out against sexual assault, alleging later that the suspension was because she had tweeted a ‘private phone number.’

Loomer, in contrast, has actively threatened the Muslim community with her tweets: wanting to deprive them of their citizenship and their livelihood – clear steps, regardless of her intent, upwards on the pyramid of hate leading up to eventual genocide. This is in a climate where the Muslim community in the United States already faces threats ranging anywhere from surveillance to vigilante and state violence. Loomer is using a public platform with which she speaks to over 100,000 followers to make comments that have a high likelihood of inciting physical violence. In addition – far more dangerous than a phone number – Loomer has tweeted pictures and videos (which are still up!) without consent, of course, of Muslim Americans in hijabs walking out in New York City, making them or other women in hijabs in New York clear targets of violence from any fanatical right-wing followers she might be radicalizing.

Twitter’s selective use of the ban tool in order to suppress activists and prop up white supremacists is well documented, and it is clear the platform has a harassment problem. But this is just one more obvious instance where the company has shown what is laziness at best and active complicity in white supremacy at worst in how they choose to moderate their platform. After the McGowan controversy went down, CEO Jack Dorsey tweeted what seems – then and now – a hollow apology and a claim that the company is aiming to counteract the silencing of marginalized voices on their platform and to strengthen their policy against abusers and bullies. Laura Loomer’s tweets and verified account – standing loud and proud on the internet – are a marker of proof that Dorsey and Twitter are full, apologies for the language, of horseshit.

The last 2 weeks.

Wilbur on the swing, he is such a big boy now.

On the way home from the park I took odd shots of the neighbourhood.

Some tall pine trees.

Love this balcony

Schools have water tanks now

This tree is growing next to my house I had no idea it could get so big.

Sign of the times, we often took a shortcut through our school to the bus stop not allowed any more.

Some are very private

This one is for sale interesting old house but on a very busy road.

Love the door and window screens.

The mural has seen better days could do with a freshen up.

The smell is lovely.

some houses are tiny

street flowers

Can you see the mischief

On Wednesday last week we went to the zoo

We saw the big male elephant

The babies were impressed

Well a bit anyway

the big turtles

very slow but very impressive

A lazy Hippo.

there was a baby one in the water diving but I must have missed him.

A baby elephant

with his mum

family together

and of course these fellows

Well that's bye from us.

Women Don’t Bleed Blue (Even Yalies and Members of the Social Register)

Several years ago, Ann Bartow blogged here about U.S. advertisers’ first use of a “red dot” to illustrate blood on a menstrual hygiene pad.

According to this article in the Scottish Daily Mail, an ad for Bodyform in the U.K. is drawing controversy for using red liquid, instead of the customary blue, to illustrate the pad’s absorbency. The ad uses the hashtag #bloodnormal and features a man buying menstrual hygiene products, a woman floating on a white pad-shaped mattress in a swimming pool, and a woman in a shower with blood flowing down her legs.

The ad has been called “disgusting” by one person but “groundbreaking” by none other than Cosmo magazine (itself at the forefront of the menstrual equity movement, joining with Jennifer Weiss-Wolf to promote an on-line petition against the tampon tax).

I’m all for #bloodnormal, but in, say, a diaper commercial, I wouldn’t want to see yellow or brown stand-ins for a baby’s digestive output. Hypocritical? Probably.

U.S. Men’s Soccer Proves That Mediocre Men Will Still Earn More Than Successful Women

Last week, the United States’ men’s soccer team lost 2-1 in a World Cup Qualifier to Trinidad and Tobago, the only team below them in the group standings, sending them crashing out of the Men’s World Cup for the first time since 1986 in what some are calling “the worst loss in the history of U.S. Men’s Soccer.” It seems as good a time as any to remember that it was only in April this year that the U.S. women’s team, too, lost an important fight: the battle to gain equal pay with the men’s team. And it also seems a good time to remember that while the U.S. men comically crashed out of the World Cup, the women won it in 2015.

The deeper one dives, the more embarrassing the record is. The U.S. women’s team’s record in World Cups the past twenty years includes two victories, one second-place finish, and three third-place finishes. The men’s involves one non-qualification, two exits at the Group Stages, two at the Round of 16, and one high of a quarterfinal finish. The women have lost only two Olympic gold medals between 1996 and 2016. The (under-23, but nonetheless) men did not qualify three times in the same period.

The history of U.S. men’s soccer is far from illustrious in general, especially on the international stage. As FiveThirtyEight points out, “In the 1998 World Cup and the 2006 World Cup — the last two on European soil — it combined for one tie and five losses. In 2015, the team was stunned at home in the Gold Cup semifinal by Jamaica, which at the time was ranked 76th in the world by FIFA.” Meanwhile, the women’s team has been characterized by roaring successes, entertaining play, stimulating victories, and renewed public interest in soccer. They also now bring in more game revenue than men, bringing in $23 million last year, and turned over 3 times as much profit as the men in 2016. U.S. Soccer predicts the same will happen in 2017 for the women — while the men are expected to turn over a loss of $1 million.

In March 2016, five of the U.S. women’s team players filed a federal complaint with the Equal Employment Opportunity Commission alleging that U.S. soccer acted discriminatorily in paying its female players less than those on the men’s team. The complaint pointed out some startling figures: women, if they won and including their win bonus, would earn $4,950 per game; the men would earn $5,000 just for showing up (and a whopping $8,166 if they won, rare as that might be). If women won all their games in a year, they’d earn $99,000 — still less than the men’s salary for just showing up and losing every game, at $100,000. And that’s not counting the litany of smaller discriminatory practices: coach flights vs. business class; dangerous artificial turf vs. real fields; and lower per diems and pay for sponsor appearances.

The fight did end in some form of victory in April this year: women’s players got pay raises of over 30%, better bonuses, higher per diems, and other financial benefits. And yet U.S. soccer couldn’t take the final leap and pay a multiple World Cup-winning, tremendously victorious side that is more financially profitable the same amount of money as a mediocre side that crashes out of a World Cup and expects to net a revenue loss.

Last week’s World Cup qualifier loss was a sobering reminder to some soccer fans about systemic problems with U.S. men’s soccer. But to many of us, it is also a sobering reminder to women: you can be twice or thrice as good as men, but you still cannot expect to be treated or paid on par with them.

In Which We Autistically Begin Our Career In Surgery

Showing Appreciation

by ELEANOR MORROW

The Good Doctor

creator David Shore

ABC

Shaun Murphy (Freddie Highmore) is a functioning autistic surgical student. In the first episode of The Good Doctor, he flies from Wyoming to San Jose, California to begin his first residency. Both places are much the same to him, and really to us, since we have never been to San Jose or Cheyenne, and there is nothing in The Good Doctor to recommend either.

When he lands at the San Jose airport, he witnesses a severe accident. A pane of glass falls on an African-American boy. Shards lodge in the boy's abdomen and enter his bloodstream; his neck is also slashed. A well-meaning doctor tries to help, but Shaun can see that he is doing it wrong, because autistic people have superpowers much like Superman's x-ray vision. Shaun immediately recalls information from medical textbooks he has pored over. He creates a makeshift valve to allow the boy to keep breathing, but not before stealing a knife from a gaggle of TSA agents.

After they see that their son has been saved by this weird white man, the parents of the boy give him a soft hug. Shaun is neither excited nor disturbed by their outpouring of emotion. He does not seem to understand it at all, an unlikely reaction for a functioning autistic. Then again, if he bristled at their touch, how sympathetic would he be in the scenes that follow?

Shaun's benefactor is Aaron Glassman (Richard Schiff). He is president of the hospital to which the African-American boy is dispatched. Shaun follows, begging the doctors attending the case to give the child an echocardiogram. They won't do it, probably because they are racist. Or maybe not racist, since most of the residents at this hospital are individuals of color, but racist against autistic people.

In many other countries, individuals with developmental disabilities are being eliminated before they are even born. I would like to think that in America, we value genetic diversity, but The Good Doctor puts the lie to this entire concept, since Shaun's supervising Mexican-American surgeon Dr. Melendez (Nicholas Gonzalez) tells him, on his first day, that he will only be doing suction.

While it is certainly nice to see a hospital full of doctors from a diverse variety of backgrounds, The Good Doctor sort of writes itself into a political hole here. It is not really appropriate or convincing to identify these various individuals from disparate life experiences as all united in their intolerance of a white man. I say, "not appropriate," because it implies that coming from a particular place gives you no particular understanding of what it means to be an outsider in every context. I think that's a lie.

As it happens, the actors who play Shaun's immediate superiors on The Good Doctor have a very specific background. Hill Harper, who portrays the head of surgery at the hospital, attended Harvard Law School. Gonzalez, who stars as the arrogant surgeon meant to be Shaun's supervisor, spent time at Oxford. I do not believe any of these people in real life would be intolerant of someone with autism, and it feels somewhat wrong to force them into positions where they have to pretend this.

Shaun's character promulgates this contradiction in a scene with another resident, Claire Browne (Antonia Thomas). (Thomas is an English actress, born of a Jamaican mother and a British father.) He says to her in the hospital's cafeteria, "The first time I met you, you were rude to me. The next time, you were nice to me. Which time were you pretending?"

In flashbacks we see that young Shaun (Graham Verchere) was essentially raised by his brother Steve (Dylan Kingwell). They live in a school bus for some reason, which seems slightly implausible, but not for Shaun, who asks if they can get a television. Steve says that they can't because they live in a school bus. Steve might be annoyed sometimes by his brother's autism, but in general he is remarkably good-natured about it.

In this inverted world, certain people are surgeons. Maybe it's great that they are, maybe some of them shouldn't be. It is not up to us to judge, whether we are white or Mexican-American or African-American, since we can never truly know the subjectivity of another person. We must only show our appreciation, our happiness that another person, who exists at the behest of something larger than ourselves, lurks behind the mask of the everyday. In this regular-ish place, superpowers are always secret.

Or maybe the only superpower that Freddie Highmore's character actually has is that he is white, and the rest is just a distracting backstory.

Eleanor Morrow is the senior contributor to This Recording.

Fresh Mozzarella Salad with Tomatoes, Cucumber and Pomegranate Molasses

Fergus Noodle: make me this ty

Canadian Man Gets 9 Months Detention for Serial Swattings, Bomb Threats

A 19-year-old Canadian man was found guilty of making almost three dozen fraudulent calls to emergency services across North America in 2013 and 2014. The false alarms, two of which targeted this author, involved phoning in phony bomb threats and multiple attempts at “swatting,” a dangerous hoax in which the perpetrator spoofs a call about a hostage situation or other violent crime in progress in the hopes of tricking police into responding at a particular address with deadly force.

Curtis Gervais of Ottawa was 16 when he began his swatting spree, which prompted police departments across the United States and Canada to respond to fake bomb threats and active shooter reports at a number of schools and residences.

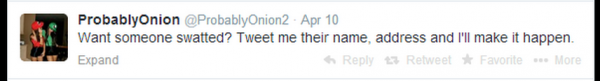

Gervais, who taunted swatting targets using the Twitter accounts “ProbablyOnion” and “ProbablyOnion2,” got such a high off of his escapades that he hung out a for-hire shingle on Twitter, offering to swat anyone with the following tweet:

Several Twitter users apparently took him up on that offer. On March 9, 2014, @ProbablyOnion started sending me rude and annoying messages on Twitter. A month later (and several weeks after blocking him on Twitter), I received a phone call from the local police department. It was early in the morning on Apr. 10, and the cops wanted to know if everything was okay at our address.

Since this was not the first time someone had called in a fake hostage situation at my home, the call I received came from the police department’s non-emergency number, and they were unsurprised when I told them that the Krebs manor and all of its inhabitants were just fine.

Minutes after my local police department received that fake notification, @ProbablyOnion was bragging on Twitter about swatting me, including me on his public messages: “You have 5 hostages? And you will kill 1 hostage every 6 times and the police have 25 minutes to get you $100k in clear plastic.” Another message read: “Good morning! Just dispatched a swat team to your house, they didn’t even call you this time, hahaha.”

I told this user privately that targeting an investigative reporter maybe wasn’t the brightest idea, and that he was likely to wind up in jail soon. On May 7, @ProbablyOnion tried to get the swat team to visit my home again, and once again without success. “How’s your door?” he tweeted. I replied: “Door’s fine, Curtis. But I’m guessing yours won’t be soon. Nice opsec!”

I was referring to a document that had just been leaked on Pastebin, which identified @ProbablyOnion as a 19-year-old Curtis Gervais from Ontario. @ProbablyOnion laughed it off but didn’t deny the accuracy of the information, except to tweet that the document got his age wrong.

A day later, @ProbablyOnion would post his final tweet before being arrested: “Still awaiting for the horsies to bash down my door,” a taunting reference to the Royal Canadian Mounted Police (RCMP).

A Sept. 14, 2017 article in the Ottawa Citizen doesn’t name Gervais because it is against the law in Canada to name individuals charged with or convicted of crimes committed while they were minors. But the story quite clearly refers to Gervais, who reportedly is now married and expecting a child.

The Citizen says the teenager was arrested by Ottawa police after the U.S. FBI traced his Internet address to his parents’ home. The story notes that “the hacker” and his family have maintained his innocence throughout the trial, and that they plan to appeal the verdict. Gervais’ attorneys reportedly claimed the youth was framed by the hacker collective Anonymous, but the judge in the case was unconvinced.

Apparently, Ontario Court Justice Mitch Hoffman handed down a lenient sentence in part because of more than 900 hours of volunteer service the accused had performed in recent years. From the story:

Hoffman said that troublesome 16-year-old was hard to reconcile with the 19-year-old, recently married and soon-to-be father who stood in court before him, accompanied in court Thursday by his wife, father and mother.

“He has a bright future ahead of him if he uses his high level of computer skills and high intellect in a pro-social way,” Hoffman said. “If he does not, he has a penitentiary cell waiting for him if he uses his skills to criminal ends.”

According to the article, the teen will serve six months of his nine-month sentence at a youth group home and three months at home “under strict restrictions, including the forfeiture of a home computer used to carry out the cyber pranks.” He also is barred from using Twitter or Skype during his 18-month probation period.

Most people involved in swatting and making bomb threats are young males under the age of 18 — the age when kids seem to have little appreciation for or care about the seriousness of their actions. According to the FBI, each swatting incident costs emergency responders approximately $10,000. Each hoax also unnecessarily endangers the lives of the responders and the public.

In February 2017, another 19-year-old, a man from Long Beach, Calif. named Eric “Cosmo the God” Taylor, was sentenced to three years’ probation for his role in swatting my home in Northern Virginia in 2013. Taylor was among several men involved in making a false report to my local police department at the time about a supposed hostage situation at our house. In response, a heavily-armed police force surrounded my home and put me in handcuffs at gunpoint before the police realized it was all a dangerous hoax.

Sweet Pockets! Strawberry & Rhubarb Pop Tarts

Fergus Noodle: She's changed the blog to Lorraine Elliot Not Quite Nigella

Crispy Cauliflower with Tahini Yoghurt Sauce

The Suite Life at Four Seasons, Sydney

Fergus Noodle: This was her husband's birthday thing but she didn't pay for it

Research Finds Obesity is in the Eye of the Beholder

In an era of body positivity, more people are noting the way American culture stigmatizes obesity and discriminates by weight. One challenge for studying this inequality is that a common measure for obesity, Body Mass Index (BMI), which divides weight by the square of height, has been criticized for ignoring important variation in healthy bodies. Plus, the basis for weight discrimination is what other people see as “too fat,” and that’s a standard with a lot of variation.

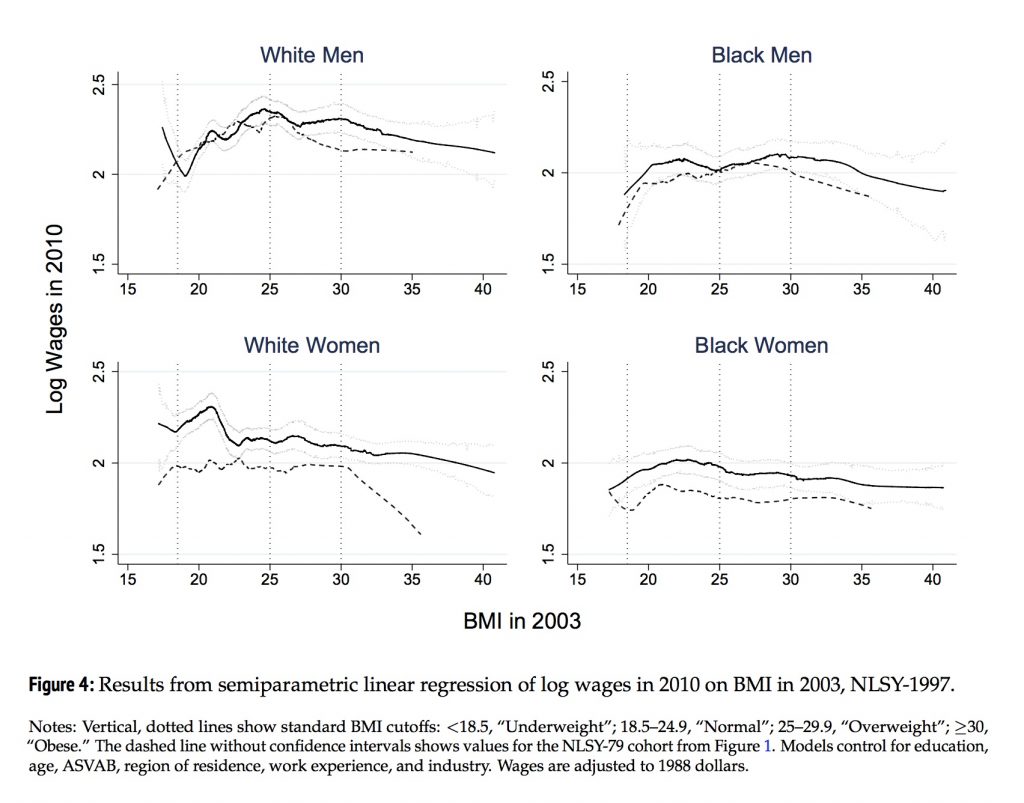

Recent research in Sociological Science from Vida Maralani and Douglas McKee gives us a picture of how the relationship between obesity and inequality changes with social context. Using data from the National Longitudinal Surveys of Youth (NLSY), Maralani and McKee measure BMI in two cohorts, one in 1981 and one in 2003. They then look at social outcomes seven years later, including wages, the probability of a person being married, and total family income.

The figure below shows their findings for BMI and 2010 wages for each group in the study. The dotted lines show the same relationships from 1988 for comparison.

For White and Black men, wages actually go up as their BMI increases from the “Underweight” to “Normal” ranges, then level off and slowly decline as they cross into the “Obese” range. This pattern is fairly similar to 1988, but check out the “White Women” graph in the lower left quadrant. In 1988, the authors find a sharp “obesity penalty” in which women over a BMI of 30 reported a steady decline in wages. By 2010, this has largely leveled off, but wage inequality didn’t go away. Instead, that spike near the beginning of the graph suggests people perceived as skinny started earning more. The authors write:

The results suggest that perceptions of body size may have changed across cohorts differently by race and gender in ways that are consistent with a normalizing of corpulence for black men and women, a reinforcement of thin beauty ideals for white women, and a status quo of a midrange body size that is neither too thin nor too large for white men (pgs. 305-306).

This research brings back an important lesson about what sociologists mean when they say something is “socially constructed”—patterns in inequality can change and adapt over time as people change the way they interpret the world around them.

Evan Stewart is a Ph.D. candidate in sociology at the University of Minnesota. You can follow him on Twitter. (View original at https://thesocietypages.org/socimages)

Liisa, 26

“Today my clothing was inspired by my stairway with light orange and light green walls. Actually this color mixture reminds me of puke. I am an active user of colors and I don't feel myself cozy in black. My forever sources of inspiration: monochromatism, retrofuturism, sex and fairytales.”

25 July 2017, Mannerheimintie

Police Officer Comforts White Woman by Saying “We Only Kill Black People”

Dashcam footage released last week shows a Georgia police officer telling a passenger during a traffic stop that cops “only shoot black people.” The video isn’t just a bad joke caught on camera. It reveals layers of historical and present police violence against black people – often in the name of making white women feel safe.

The dashcam footage, which surfaced last week, shows Lt. Greg Abbott standing outside of a vehicle during a DUI traffic stop in July 2016. After the passenger – a white woman – expresses fear that she would be shot for moving her hands during the stop, Abbott responds: “But you’re not black. Remember, we only shoot black people. Yeah, we only kill black people, right?” Lt. Abbott retired last Thursday after backlash in response to the video. His attorney released a troubling statement, saying: “In context, his comments were clearly aimed at attempting to gain compliance by using the passenger’s own statements and reasoning to avoid making an arrest.”

It’s sad to admit that Lt. Abbott’s comments do point to a cold, hard truth: law enforcement disproportionately murders people of color. Just last year, black people were over twice as likely to be murdered by police compared to white people; indigenous folks were nearly four times as likely to be killed. This state murder isn’t something unique to our day and age. As Claudia Rankine writes, be it “Dying in ship hulls [...] gunned down by police, or warehoused in prisons,” dead black people are “part of normal life here.”

The state murder of black people is indeed normalized in America – and in case you missed it, this police violence is a feminist issue. Whether or not Lt. Abbott was serious or joking in his comments, they are unacceptable; and they reveal the continued pattern of the state dehumanizing black people — here by treating black lives as punchlines — as a means of comforting white women. As police officers feel emboldened to joke about the black people they kill, how are black people — women, femmes, trans and non-binary folk, men — supposed to navigate our safety and livelihood? And what will white women, even those who like this passenger are seemingly aware of police violence, do to dismantle the police state that will do anything to protect their mutual whiteness?