Howdy, howdy, folks.

For many years (ten now, about which, more soon) McMansion Hell has featured many prominent and diverse atrocities from all over these great United States and sometimes beyond them. However, most of these posts have consisted of houses built during the McMansion Era proper – from the 80s up through around the early 2010s.

This is for a number of reasons. First of all: I like these houses because they are insane. Second of all, they are indeed quite different from one another – they represent the owner’s idiosyncratic if poorly rendered desires and fantasies. They are heavily psychologically loaded buildings. One family dreams endlessly of Tuscany, another wants to recreate the mall. All interiorize previously exterior forms of consumption.

These houses were also very expensive to build compared to their contemporary iterations: all real, solid wood cabinetry and trim, wrought iron railings, marble floors, elaborate murals - none of this is cheap. This is not to say that I’m nostalgic for the classical McMansion (though many are), only that it, like most other facets of architectural and everyday life, has become progressively cheaper and more bland.

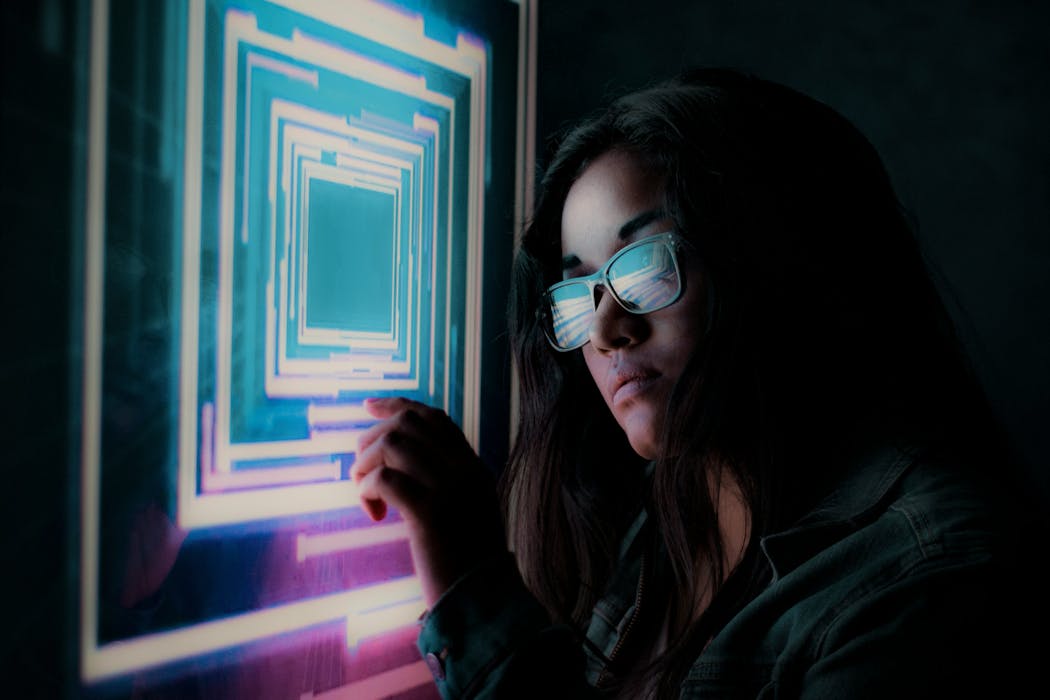

The McMansion never truly goes away. It merely changes shape over time. One of the shapes it currently takes is a particularly loathsome imitation of contemporary high architecture (specifically the kind of houses architects love to build for celebrities in California) executed in the most wretchedly parsimonious manner possible. It feels cheap to use the word ‘slop’ but their indiscriminate nature - the way they have no regard for why or how the things they imitate even work - allows it. Of all the building forms that could be generated with AI, this is the most likely. At any rate, behold:

Yes this is a real house. Yes you can buy it for $6 million in, yet again, Barrington, IL. It has 5 bedrooms and 5.5 bathrooms totaling 11,600 square feet. But most importantly, it looks like dogshit, and that’s after ten layers of Photoshop have been used to gussy it up, which, by the way, also makes it appear entirely not of this world. Were it not for the photos of the empty interiors, I myself would have trouble trusting my own eyes. Part of the reason it looks so unreal is that the design itself is absurd, as though someone created four equally ugly vessels and threw them up one by one.

In 2017, in a now-deleted essay for Curbed (RIP - they destroyed the archive) I called these types of houses McModerns, simply because they were McMansions dressed up in modernist garb, which they wore no differently than they would Neo-Tudor or Mediterranean (broadly construed). These houses don’t warrant a neologism of their own, but they do feel like a degraded or perhaps even gonzo version of even that old concept. Slop works fine too, especially because half of what’s in these images isn’t real.

Much fascinates me about these houses, but one of their most distinctive elements vis-à-vis the last 30 years of building is how overtly and almost hostilely masculine they are. Anything that can be construed as feminized - color, softness, ornament - has been ruthlessly purged. They also rip off tech industry minimalism, which only adds to their bro-ey nature. While previous iterations of McModernism (think new builds in Colorado with fake wood exteriors) scream dads with IPAs, these houses scream Reddit to me. They are Elon Musk-adjacent in sentiment.

By the way, this is what that room looks like without the fake furniture. It’s basically a sunroom.

Whole Foods would like to call in a robbery.

Because these houses are designed by men, for men, no one involved has learned how a kitchen works. Many are calling this setup the “grindset TikTok video kitchen.” This is the kitchen you see in those day-in-the-life-of-an-AI-startup-founder videos your algorithm forces you to watch against your will.

Virtual staging is actual literal slop. In fact, one can say that it was an early harbinger of the ontological crisis we now face, one of the first instances where one is forced against one’s will to question reality, what one sees with one’s own eyes. Beyond that, I think virtual staging is literally a form of lying. You can use it to make a space look bigger or smaller than it is. In this – lying to impress – it also has a lot in common with AI. This dining room has nothing to do with the world I’m living in. These chairs are not my problem.

It’s actually AMAZING how much of what’s in this house, beyond the furniture, is fake. Every single material is fake. The stone is aluminum paneling. The plants are plastic. The concrete appears to be printed on some kind of surface (as evidenced by its repetitive pattern), though it’s hard to say from just pictures. I don’t even trust the floors!!

Ok if you haven’t read Kelly Pendergrast’s amazing essay “Merchandizing the Void” about how houses are all like stores now, HERE IS THE LINK. Some ideas never die, they just evolve, king. Like you.

Please, I’m very cold.

Unfortunately there are no pictures of the rear exterior of this house, so this is where we will have to conclude for today. That being said, these houses and their antecedents are developing a design language all their own that will, in time, be as culturally rich to us as the houses of yore. The problem is they are less visually interesting. They are houses made to scroll in and scroll right by. Expect to see more of them here, but only if they have something, anything to say.