Is Comcast really throttling Netflix?

Short answer: Given the evidence presented so far, we don’t really know. It’s complicated. About the only people who could really tell us are Comcast, and of course there is little incentive for them to tell you if they are. What we do know is that they’ve got some kind of problem somewhere, as people are able to get better performance by using proxy servers. Either Comcast is throttling, hasn’t got sufficient bandwidth somewhere, or is deliberately not routing traffic efficiently. Or all three.

Long answer: There have been interesting bits and pieces thrown around recently about whether Comcast is or isn’t throttling Netflix and/or access to Amazon AWS in the wake of the FCC losing its method of enforcing Network Neutrality. Some people have been able to show that using a proxy server results in faster and better Netflix performance.

It’s worth pointing out that based on Netflix’s visibility into ISPs, connectivity between Comcast and Netflix has been getting worse pretty steadily for the last year and a half.

I’m going to try to provide a bit of an overview, hopefully without going into too much unnecessary detail, to explain why it is actually quite complicated, and what kinds of evidence we would need to confirm whether or not throttling is happening. This comes in part from my background of working at an ISP, both in the Network Operations Centre and later as a sysadmin (as well as general experience as a consumer and a sysadmin). Both roles involved a fair bit of work with the networks team and proved to be a fascinating learning experience.

The easiest way to think about internet connectivity is in terms of a tree.

Your ISP will have a lot of bandwidth available at the core represented by the thick trunk, with fairly thick branches feeding off into slightly smaller branches, and even smaller branches off that and so on.

Your home connection is on what is referred to as the “last mile”, the final stretch between your home and the nearest distribution point (it would be the smallest twig). It is the part of the connection most likely to restrict you, as distance and line quality vary, and that has a huge impact on maximum theoretical bandwidth. Depending on the scale of the network and how the ISP has chosen to run its service, you might pass through a few more distribution points before you get to the ISP’s central bandwidth (the central trunk of the tree).

At each stage your ISP will be aggregating network traffic together into single links, and at each stage they might not have sufficient bandwidth to cope with all the customers using all their possible bandwidth at the same time. Achieving that would generally be prohibitively expensive. Instead, ISPs try to ensure they have just enough (plus suitable headroom) to cope with typical usage, and to provide for enough growth. People’s internet usage is actually reasonably predictable and usually grows at a fairly predictable rate (much like electricity usage). For example, an ISP might sell a 100Mbps service, with 20 houses connected to a 1000Mbps connection. For the most part people aren’t going to actually use 100Mbps, even during peak, so that 1000Mbps connection should be fine, and the ISP will be able to predict with reasonable confidence when it’s no longer going to be sufficient. If all the customers did start downloading large content simultaneously, things would get more than a little congested and they’d see packet loss and slow downloads.
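To put rough numbers on that example, here is a minimal sketch of the arithmetic. The figures (20 houses, 100Mbps plans, a 1000Mbps link) come from the paragraph above; the function names are my own, and the even split between active houses is a simplifying assumption, not how real networks actually share a link.

```python
# Toy numbers from the example: 20 houses on a 100 Mbps plan
# sharing a single 1000 Mbps uplink.
HOUSES = 20
PLAN_MBPS = 100
UPLINK_MBPS = 1000


def oversubscription_ratio(houses, plan_mbps, uplink_mbps):
    """Bandwidth sold divided by bandwidth actually available upstream."""
    return (houses * plan_mbps) / uplink_mbps


def per_house_share(active_houses, plan_mbps, uplink_mbps):
    """Best-case rate per active house, assuming the uplink is split evenly
    between them (an assumption made purely for illustration)."""
    if active_houses == 0:
        return plan_mbps
    return min(plan_mbps, uplink_mbps / active_houses)


if __name__ == "__main__":
    print("oversubscription:", oversubscription_ratio(HOUSES, PLAN_MBPS, UPLINK_MBPS))
    # Show how the per-house rate falls as more houses pull data at once.
    for active in (5, 10, 20):
        print(active, "active houses ->",
              per_house_share(active, PLAN_MBPS, UPLINK_MBPS), "Mbps each")
```

With up to 10 houses busy at once, everyone can still get the full 100Mbps; it is only when most of the street is downloading flat out that the shared link becomes the bottleneck.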

This next stage is a little ISP dependent, and I don’t know for sure how Comcast has its network arranged, but we can make some fairly safe assumptions. It’s safe to assume that they’ll have regional distribution points in their network, which will have connectivity off to the internet’s backbone with various transit providers and peering providers as appropriate. There will also likely be connections off to various regional internet exchange points (e.g. Seattle’s SIX: http://en.wikipedia.org/wiki/Seattle_Internet_Exchange). These typically offer cheap and low latency connections to locally hosted resources.

ISPs will have multiple networking providers that provide their connectivity to the internet backbone. This will likely be made up of a mix of transit providers (go-between connectivity companies) and peering providers (core internet bandwidth). The use of multiple providers allows for resiliency in a network. Almost everything you can access on the internet will be accessible via a number of these providers. It also allows the ISP to manage costs. Internet connectivity can get to be quite expensive at an ISP level. Precise pricing on the contracts with these providers is generally kept secret. What I do know from my past experience is that the price changes somewhat depending on how much data is provided and consumed and, just as importantly, what the ratio is between those.

Transit providers want to be marketable to companies that might want their services. If you’re uploading content through them, it’s reasonable for them to assume you’re providing value to their other customers, and thus they’ll want to keep you using them. The more the ratio is skewed towards consumption instead of provision, the more expensive the bandwidth becomes.

As you can probably imagine, this results in ISPs trying to do a delicate balancing act, trying to ensure they get the most favourable ratios with all of their connectivity providers. They’ll do this by manipulating route costs in routing tables, effectively changing which provider is favoured by network packets by making one provider seem cheaper (in networking terms) than another. I know of a few ISPs in the UK that continued to run their own usenet/newsgroup servers long after they ceased providing direct value to customers, because it was a good source of upload traffic that could be shifted around to other transit connections without affecting customers (particularly if they kept certain popular binaries groups on it).
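As a very rough illustration of what “making one provider seem cheaper” means, here is a toy sketch. It is not how BGP or any real router works; the provider names, the cost numbers and the `preferred_provider` helper are all hypothetical. It only shows that traffic follows whichever route currently has the lowest cost, so changing a number is enough to shift it.

```python
from typing import Dict


def preferred_provider(route_costs: Dict[str, int]) -> str:
    """Return the provider with the lowest route cost.

    Ties are broken alphabetically by provider name so the result is stable.
    """
    return min(sorted(route_costs), key=lambda name: route_costs[name])


if __name__ == "__main__":
    # Hypothetical costs for reaching the same destination via two providers.
    costs = {"transit_a": 10, "transit_b": 20}
    print("before:", preferred_provider(costs))

    # The ISP decides transit_a is carrying too much inbound traffic,
    # so it makes that route look more expensive.
    costs["transit_a"] = 30
    print("after:", preferred_provider(costs))
```

Nothing about the destination has changed between the two calls; only the ISP’s own view of which path is cheaper.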

This kind of juggling of routes between providers happens all the time with ISPs. With the way network routing and TCP work, you shouldn’t even notice it. ISPs are looking to get a perfect balance of cost, speed and latency. Tier 1 networks (peering companies that provide the core internet connectivity) are likely to be closest to the ultimate destination, but they’re also expensive. Tier 2 networks (transit providers) are likely a few extra hops away, so likely incur extra latency, but are cheaper. Of course your idea of a perfect balance might be different from theirs. They might be asking “What’s the worst I can get away with?”

Before we can get back to the core question about Comcast, it’s worth taking a moment to look at Netflix. How does Netflix provide content to its customers? Netflix is famous as a user of Amazon Web Services; almost their entire infrastructure is based on Amazon’s cloud services. However, they also use what are called CDNs, Content Delivery Networks. They don’t just use one company either; they have multiple providers. When Netflix has transcoded video content for delivery, they upload the videos to CDNs for actual delivery to customers (at one point Netflix had an exclusive deal with Level 3; I’m not sure if that still applies). These CDN companies operate in data-centers around the world, providing tiers of cached content and automatically trying to have content served up as close to customers’ home network connections as possible.

So where does that bring us with Comcast and Netflix? People are able to show that if they use a proxy their connection to Netflix improves. That tells us one thing at least: the initial hops in their connectivity to the nearest regional distribution point aren’t congested. The cable company that provided my internet access when I was living in London routinely oversold the local exchange, resulting in terrible service for us until we complained, whilst friends were fine, even as little as a few streets away. So in this case with Comcast and Netflix we know that connectivity to the end user isn’t congested, as clearly they can receive data at a high speed.

So we know the congestion is probably coming from the core. We’re reaching the area where we just don’t have enough information to truly answer the question. If people were able to set up a proxy that sends packets through exactly the same exit point from Comcast’s network as the packets going to the relevant CDN for the content we’re comparing, and we saw that they still got better throughput, well, then we’d be a lot closer to being confident that Netflix is being throttled by Comcast. Unfortunately we don’t have that evidence. So we can’t say for sure if Comcast is explicitly throttling Netflix traffic or not. We just know that either they are, or something somewhere is wrong in their infrastructure, like a congested transit link, and that Comcast isn’t doing anything to improve things. As you can see from the graph I posted earlier, it’s clear things have been degrading for months.
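The reasoning in the last few paragraphs can be written down as a small decision sketch. This is just my summary of the argument, not a measurement tool; the `interpret` function, its parameters and the result labels are all hypothetical names chosen for illustration.

```python
LAST_MILE_OK_CAUSE_UNKNOWN = "last mile fine; throttling or upstream congestion"
THROTTLING_LIKELY = "same exit point and proxy still faster; throttling looks likely"
NO_SIGNAL = "proxy no faster; this comparison tells us nothing"


def interpret(direct_mbps: float, proxy_mbps: float, same_exit_point: bool) -> str:
    """Map one direct-vs-proxy comparison onto what it can and cannot tell us.

    direct_mbps:     throughput streaming straight from the CDN
    proxy_mbps:      throughput for the same content fetched via a proxy
    same_exit_point: True only if the proxied traffic is known to leave the
                     ISP's network through the same link as the direct traffic
    """
    if proxy_mbps <= direct_mbps:
        return NO_SIGNAL
    if same_exit_point:
        # Same path out of the ISP, different treatment: hard to blame a
        # congested link when the proxy traffic shares it.
        return THROTTLING_LIKELY
    # The proxy may simply be taking a different, less congested route.
    return LAST_MILE_OK_CAUSE_UNKNOWN


if __name__ == "__main__":
    # Roughly the situation so far: the proxy is faster, exit point unknown.
    print(interpret(direct_mbps=1.5, proxy_mbps=5.0, same_exit_point=False))
```

The evidence people have posted so far only gets us as far as the final branch, which is why it isn’t conclusive.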

So why the talk about network neutrality? Part of the problem is that Comcast is also a content provider, and that business is extremely lucrative. With the internet increasingly being the main source for content, via Netflix, iTunes etc., their media business has the potential to suffer. They make terrific amounts of money from advertising alongside their programs. If you’re streaming content from other sources, that lucrative revenue vanishes. Comcast actually has lots of incentive against providing you with good quality service, and only a token excuse for competition to give them any real incentive to upgrade your connection speeds and network. If Network Neutrality is not enforced, Comcast could deliberately choose to cripple access to any competitor they liked, be it ISP, rival content provider or otherwise. With most of the country stuck under what is effectively a government-blessed monopoly, it’s not like people could take their business elsewhere.

We shall see what we shall see, I guess.

Hopefully I’ve managed to explain how there are multiple points that congestion could be occurring, and explained why the evidence we’ve seen so far isn’t really a smoking gun about whether or not Comcast is throttling Netflix traffic. If anything is unclear, and / or I’ve done a bad job, feel free to leave a comment.

Newegg offers the Rosewill IDE/SATA to USB Adapter with Protection Case, model no. RCW-608, for $14.99 with free shipping. That's the lowest total price we could find by $12. This device allows you to turn 3.5" hard drives and 5.25" optical drives into USB drives. It comes with a power adapter. Deal ends March 19.

Newegg offers the Rosewill IDE/SATA to USB Adapter with Protection Case, model no. RCW-608, for $14.99 with free shipping. That's the lowest total price we could find by $12. This device allows you to turn 3.5" hard drives and 5.25" optical drives into USB drives. It comes with a power adapter. Deal ends March 19.

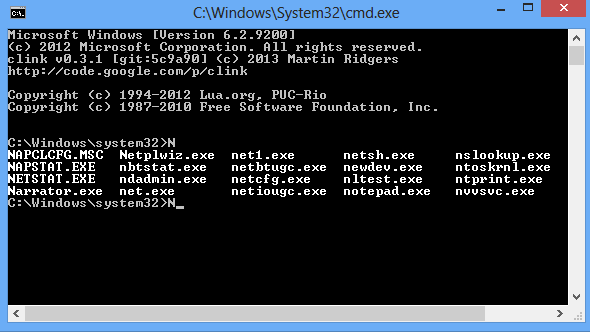

From experts to novices, most PC users benefit from launching a command line session occasionally. This is one area of Windows which hasn’t changed significantly in years, of course, but if you’re tired of its various annoyances there are steps you can take to improve the situation. And

From experts to novices, most PC users benefit from launching a command line session occasionally. This is one area of Windows which hasn’t changed significantly in years, of course, but if you’re tired of its various annoyances there are steps you can take to improve the situation. And

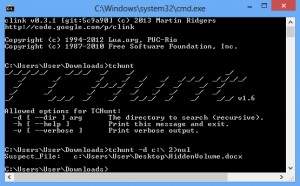

And as usual with command line tools, running tchunt on its own displays more information about the command line switches available.

And as usual with command line tools, running tchunt on its own displays more information about the command line switches available.