Shared posts

Police let the van driver clocked at 241 go free

Szdani88: xd

Smashed six cars, fled on foot

Project Spartan browser benchmarked, found to be pretty fast

Proceeds from the limited-edition PS4 go to charity

The first PlayStation went on sale on December 3, 1994, so Sony recently celebrated the PS's 20th birthday. For the occasion it prepared a special PlayStation 4 model; being a limited edition, only 12,300 units were made worldwide.

Thanks to a collaboration between Vatera and Sony, the special-edition PS4 will also be available in Hungary: one unit will be put up for auction on the auction site. The proceeds go to charity, supporting the Remény a Leukémiás Gyermekekért Alapítvány (Hope for Children with Leukemia Foundation).

The console will not be sold through regular retail channels, so anyone who wants one can bid on it at the link below until January 30.

NVIDIA Publishes Statement on GeForce GTX 970 Memory Allocation

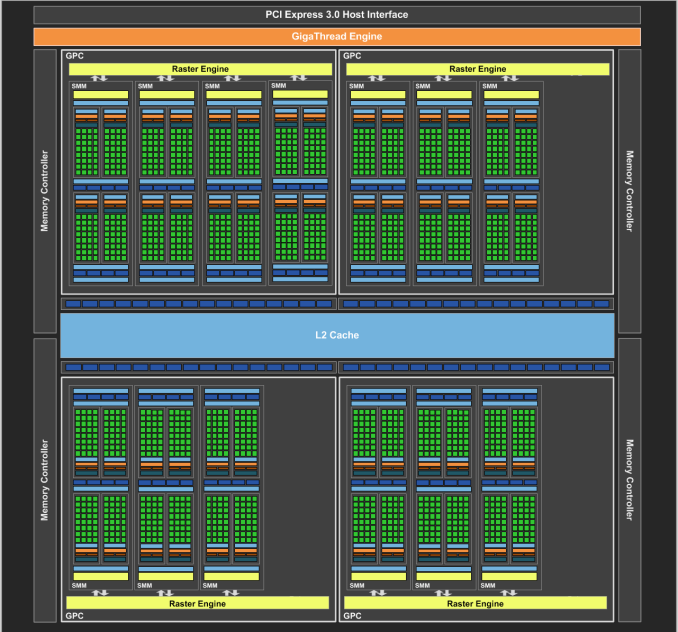

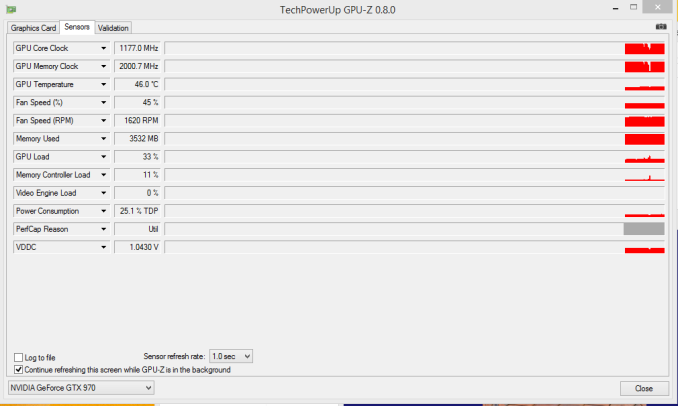

On our forums and elsewhere over the past couple of weeks there has been quite a bit of chatter on the subject of VRAM allocation on the GeForce GTX 970. To quickly summarize a more complex issue, various GTX 970 owners had observed that the GTX 970 was prone to topping out its reported VRAM allocation at 3.5GB rather than 4GB, and that meanwhile the GTX 980 was reaching 4GB allocated in similar circumstances. This unusual outcome was at odds with what we know about the cards and the underlying GM204 GPU, as NVIDIA’s specifications state that the GTX 980 and GTX 970 have identical memory configurations: 4GB of 7GHz GDDR5 on a 256-bit bus, split amongst 4 ROP/memory controller partitions. In other words, there was no known reason that the GTX 970 and GTX 980 should be behaving differently when it comes to memory allocation.

GTX 970 Memory Allocation (Image Courtesy error-id10t of Overclock.net Forums)

Since then there has been some further investigation into the matter using various tools written in CUDA in order to try to systematically confirm this phenomenon and to pinpoint what is going on. Those tests seemingly confirm the issue (the GTX 970 has something unusual going on after 3.5GB of VRAM allocation), but they have not come any closer to explaining just what is going on.

Finally, more or less the entire technical press has been pushing NVIDIA on the issue, and this morning they have released a statement on the matter, which we are republishing in full:

The GeForce GTX 970 is equipped with 4GB of dedicated graphics memory. However the 970 has a different configuration of SMs than the 980, and fewer crossbar resources to the memory system. To optimally manage memory traffic in this configuration, we segment graphics memory into a 3.5GB section and a 0.5GB section. The GPU has higher priority access to the 3.5GB section. When a game needs less than 3.5GB of video memory per draw command then it will only access the first partition, and 3rd party applications that measure memory usage will report 3.5GB of memory in use on GTX 970, but may report more for GTX 980 if there is more memory used by other commands. When a game requires more than 3.5GB of memory then we use both segments.

We understand there have been some questions about how the GTX 970 will perform when it accesses the 0.5GB memory segment. The best way to test that is to look at game performance. Compare a GTX 980 to a 970 on a game that uses less than 3.5GB. Then turn up the settings so the game needs more than 3.5GB and compare 980 and 970 performance again.

Here’s an example of some performance data:

GeForce GTX 970 Performance

| Game | Settings | GTX 980 | GTX 970 |
|---|---|---|---|
| Shadows of Mordor | <3.5GB setting = 2688x1512 Very High | 72fps | 60fps |
| | >3.5GB setting = 3456x1944 | 55fps (-24%) | 45fps (-25%) |
| Battlefield 4 | <3.5GB setting = 3840x2160 2xMSAA | 36fps | 30fps |
| | >3.5GB setting = 3840x2160 135% res | 19fps (-47%) | 15fps (-50%) |
| Call of Duty: Advanced Warfare | <3.5GB setting = 3840x2160 FSMAA T2x, Supersampling off | 82fps | 71fps |
| | >3.5GB setting = 3840x2160 FSMAA T2x, Supersampling on | 48fps (-41%) | 40fps (-44%) |

On GTX 980, Shadows of Mordor drops about 24% on GTX 980 and 25% on GTX 970, a 1% difference. On Battlefield 4, the drop is 47% on GTX 980 and 50% on GTX 970, a 3% difference. On CoD: AW, the drop is 41% on GTX 980 and 44% on GTX 970, a 3% difference. As you can see, there is very little change in the performance of the GTX 970 relative to GTX 980 on these games when it is using the 0.5GB segment.
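The percentages in NVIDIA's statement can be re-derived from the quoted frame rates. The following is a minimal sketch of that arithmetic, not anything NVIDIA published: the names `RESULTS`, `percent_drop` and `summarize` are made up for illustration, and the only inputs are the fps figures from the statement. Note that the "1%" and "3%" differences are gaps in percentage points between the two cards' drops.

```python
# Frame rates (fps) copied from NVIDIA's statement.
# game -> ((GTX 980 below 3.5GB, GTX 980 above 3.5GB),
#          (GTX 970 below 3.5GB, GTX 970 above 3.5GB))
RESULTS = {
    "Shadows of Mordor": ((72, 55), (60, 45)),
    "Battlefield 4": ((36, 19), (30, 15)),
    "Call of Duty: Advanced Warfare": ((82, 48), (71, 40)),
}


def percent_drop(before_fps, after_fps):
    """Relative performance loss, in percent, when going from before_fps to after_fps."""
    return (before_fps - after_fps) / before_fps * 100


def summarize(results=RESULTS):
    """Return {game: (GTX 980 drop, GTX 970 drop)}, each rounded to a whole percent."""
    summary = {}
    for game, ((before_980, after_980), (before_970, after_970)) in results.items():
        summary[game] = (
            round(percent_drop(before_980, after_980)),
            round(percent_drop(before_970, after_970)),
        )
    return summary


if __name__ == "__main__":
    for game, (drop_980, drop_970) in summarize().items():
        gap = drop_970 - drop_980  # gap between the rounded drops, in percentage points
        print(f"{game}: GTX 980 -{drop_980}%, GTX 970 -{drop_970}%, gap {gap} points")
```

Run as a script, this reproduces the 24/25, 47/50 and 41/44 percent figures and the 1, 3 and 3 point gaps. It says nothing about frame-time consistency, which average fps numbers cannot capture.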

Before going any further, it’s probably best to explain the nature of the message itself before discussing the content. As is almost always the case when issuing blanket technical statements to the wider press, NVIDIA has opted for a simpler, high level message that’s light on technical details in order to make the content of the message accessible to more users. For NVIDIA and their customer base this makes all the sense in the world (and we don’t resent them for it), but it goes without saying that “fewer crossbar resources to the memory system” does not come close to fully explaining the issue at hand, why it’s happening, and how in detail NVIDIA is handling VRAM allocation. Meanwhile for technical users and technical press such as ourselves we would like more information, and while we can’t speak for NVIDIA, rarely is NVIDIA’s first statement their last statement in these matters, so we do not believe this is the last we will hear on the subject.

In any case, NVIDIA’s statement affirms that the GTX 970 does materially differ from the GTX 980. Despite the outward appearance of identical memory subsystems, there is an important difference here that makes a 512MB partition of VRAM less performant or otherwise decoupled from the other 3.5GB.

Being a high level statement, NVIDIA’s focus is on the performance ramifications – mainly, that there generally aren’t any – and while we’re not prepared to affirm or deny NVIDIA’s claims, it’s clear that this only scratches the surface. VRAM allocation is a multi-variable process; drivers, applications, APIs, and OSes all play a part here, and just because VRAM is allocated doesn’t necessarily mean it’s in use, or that it’s being used in a performance-critical situation. Using VRAM for an application-level resource cache and actively loading 4GB of resources per frame are two very different scenarios, for example, and would certainly be impacted differently by NVIDIA’s split memory partitions.

For the moment with so few answers in hand we’re not going to spend too much time trying to guess what it is NVIDIA has done, but from NVIDIA’s statement it’s clear that there’s some additional investigating left to do. If nothing else, what we’ve learned today is that we know less than we thought we did, and that’s never a satisfying answer. To that end we’ll keep digging, and once we have the answers we need we’ll be back with a deeper answer on how the GTX 970’s memory subsystem works and how it influences the performance of the card.

GeForce GTX 960: a wallet-friendly GPU for gamers

Szdani88: well, this one turned out pretty terse

Arctic unveils a giant graphics card cooler

Saints Row Gat out of Hell GPU test

A standalone expansion for Saints Row 4. The main characters of the expansion are Kinzie Kensington and Johnny Gat, who head to hell after the gang's leader is sucked down there. They must find him and also deal with Satan himself. The heroes' opponents are the demons that inhabit the underworld.

A <3-shaped phone is coming to Japan

Here is Force India's 2015 livery!

This is how Red Bull was robbed (video)

NVIDIA is working on memory-saving anti-aliasing

Szdani88: what a surprise

AMD Carrizo launch in Q2 2015

AMD CEO confirms

AMD released its earnings today and one cool question came up about the upcoming Carrizo mobile APU.

Lisa Su, the new AMD President and CEO, told MKM Partners analyst Ian Ing that Carrizo is coming in Q2 2015.

This is great news, and John Byrne, AMD's Senior VP and outgoing general manager of the computing and graphics group, has already shared a few details about his excitement over Carrizo.

There are two Carrizo parts: one for big notebooks and all-in-ones, called Carrizo, and a scaled-down version called Carrizo-L. We expect the slower Carrizo-L to come first, but Lisa was not specific. Carrizo-L is based on Puma+ CPU cores with AMD Radeon R-Series GCN graphics and is intended for mainstream configurations, with Carrizo targeting higher-performance notebooks.

Usually, when a company says that something is coming in Q2 2015, that points to a Computex launch, and this Taipei-based trade show starts on June 2, 2015. We strongly believe that the first Carrizo products will be showcased at or around this date.

Lisa also pointed out that AMD has "significantly improved performance in battery life in Carrizo." This is definitely good news, as this was one of the main issues with AMD APUs in the notebook space.

Lisa also said that AMD expects Carrizo to be beneficial for embedded and other businesses as well. If only it could have come a bit earlier, so let's hope AMD can get enough significant design wins with Carrizo. AMD has a lot of work to do in order to get its products faster to market, to catch up with Intel on power and performance or simply to come up with innovative devices that will define its future. This is what we think Lisa is there for but in chip design, it simply takes time.

Evolve Beta GPU test

Evolve is a multiplayer first-person shooter video game in development by the American company Turtle Rock Studios. Evolve is a science-fiction cooperative shooter in which a team of "hunters" faces off against an alien monster.

This much money for such a stupid idea?

AMD FreeSync works well in the real world

BenQ, Samsung & LG monitors coming soon

We had a chance to see a demo of AMD FreeSync, a new DisplayPort 1.2 standard that gets rid of screen tearing in games.

It uses variable refresh rate technology to sync the monitor's refresh rate to the rate at which the GPU renders frames. This prevents the ugly picture tearing that we see on traditional monitors without G-Sync or FreeSync.
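To make the timing argument concrete, here is a toy sketch of why a fixed refresh rate and a freely running GPU fall out of step. It is a simplified model under stated assumptions, not a description of how FreeSync is implemented: the 60 Hz fixed panel, the 50 fps render rate, the 30 to 144 Hz variable window, and all function names are illustrative choices.

```python
FIXED_REFRESH_US = 16_667  # assumed fixed-rate panel, roughly 60 Hz


def scanout_offset_us(frame_ready_us, refresh_us=FIXED_REFRESH_US):
    """Time into the current scanout at which a frame completes (0 = on a refresh tick).

    With V-Sync off, swapping buffers at a non-zero offset is what shows up as a tear.
    """
    return frame_ready_us % refresh_us


def vsync_wait_us(frame_ready_us, refresh_us=FIXED_REFRESH_US):
    """Time a finished frame waits for the next fixed refresh tick with V-Sync on."""
    return (-frame_ready_us) % refresh_us


def fits_variable_window(frame_interval_us, min_interval_us, max_interval_us):
    """True when a variable-refresh panel can start its refresh as the frame arrives."""
    return min_interval_us <= frame_interval_us <= max_interval_us


if __name__ == "__main__":
    frame_interval_us = 20_000  # assumed GPU output of 50 fps
    for n in range(1, 6):
        ready = n * frame_interval_us
        print(ready, scanout_offset_us(ready), vsync_wait_us(ready))
    # Assumed panel window: 144 Hz (about 6,944 us) down to 30 Hz (about 33,333 us).
    print(fits_variable_window(frame_interval_us, 6_944, 33_333))
```

In this model every 50 fps frame lands mid-scanout on the fixed panel, so it either tears or waits for the next tick, while a panel whose window covers a 20 ms interval simply refreshes when the frame arrives.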

This is AMD's alternative to Nvidia's G-Sync and it works just as well as Nvidia's standard, and of course FreeSync works exclusively on AMD graphics cards. We had a chance to see BenQ, Samsung and LG monitors in action and we saw FreeSync in action at 3840x2160, 2560x1600 and Ultra Wide 2560x1080 resolutions.

The BenQ XL2730Z is a 27-inch 2560x1600 144 Hz monitor with a TN panel. It was running a Tomb Raider demo and worked just fine. You won't see any tearing unless the framerate drops to unplayable rates, under 30FPS or so.

The 28-inch Samsung UE590 monitor delivers 3840x2160 at 60Hz and it was running a windmill demo, where you could turn FreeSync on and off. You can really notice the difference and it is clear that FreeSync delivers a much better experience.

LG's 29UM67 is a 2560x1080 IPS Ultra Wide panel (21:9) that will be attractive to users who like this form factor. You will be able to put two A4 pages side by side on this monitor, and in gaming it will look good in strategy games, racing games and many other genres.

AMD promises a dozen or so FreeSync monitors this quarter. Some might start shipping in the next two weeks, with 20 monitors coming through the rest of the year. It is important to mention that unlike Nvidia G-Sync monitors, FreeSync monitors come with HDMI, MHL, DVI and other similar connectors while the first generation of G-Sync comes exclusively with DisplayPort 1.2 and nothing else.

https://www.youtube.com/watch?v=hnBmjN-GVuw

Still, FreeSync monitors will end up cheaper, but they will work with AMD graphics cards only. If you want tear-free output in your GeForce games, you will have to use G-Sync or live with the tearing.

Super Mario gets a German brain

The start times of five races are also changing

TSMC's 16-nanometer process is slipping

Drone-based prison smuggling has arrived

Chinese-phone reader meetup

Szdani88: lol

The world's lightest 14-inch ultrabook, from LG

Szdani88: sometimes a machine like this wouldn't be bad

you can hook up whatever display you want, so that part is sorted anyway; when you're done at home you just unplug the monitor, mouse and keyboard and take it with you

Ultrabooks are becoming ever more popular among portable computers. Apple arguably created the category when it introduced the MacBook Air, and Intel then defined the term at Computex 2011. Ultrabooks have remained consistently popular since: they are easy to carry, they now offer more than adequate performance, and today's models have batteries that can last an entire working day.

LG recently unveiled the world's lightest ultrabook, which at roughly 980 grams is certainly the winner in the 14-inch size class. For comparison, the latest 13-inch MacBook Air weighs 1.35 kilograms. LG's new model did not get an especially memorable name: it is officially called the LG 14Z950.

On the outside, the 14-inch machine has a quality metal chassis, and the lid is likewise quality metal with an illuminated LG logo in the middle. The whole machine is remarkably thin, just 13.4 mm at its thickest point, yet LG managed to fit in a battery that provides 10.5 hours of runtime. The display is a 14-inch Full HD IPS panel, the laptop is powered by a fifth-generation Intel Core processor, and another distinctive feature is the Wolfson audio chip, which provides clean Hi-Fi-quality sound.

LG also unveiled a smaller model with a 13.3-inch screen, which will compete with similar capabilities under the name LG 13Z940. Both machines will be available in several colors so that everyone can find the one that best suits their taste. According to LG, every color highlights the machine's slim, thin design. Usability is further helped by the display's Reader mode, which, with the help of the high-quality IPS panel, is less tiring on the eyes and so makes the machine suitable for longer working sessions. LG is aiming the machine primarily at the South Korean and South American markets, but it will surely turn up in other markets too, although its expected price is not yet known.

Partner-swapping sex from East Germany

Szdani88: xd

The Swiss robot that bought a Hungarian passport has been arrested

Szdani88: xd

Weekly poll: Which is the hottest smartphone of the week?

Xiaomi teases gaming product announcement on January 20

Szdani88: well, this way it's a bit more interesting; people will sooner buy an Android console from a market-leading manufacturer than from Ouya xd

Sony Xperia C3 and Xperia C3 Dual battery life

Bitcoin Dips Below $200, a Sign of Things to Come?

Earlier this week, the BTC to US dollar exchange rate dipped below $200, a level not seen since the value of the cryptocurrency rose past $1,000 on the back of its popularity as a fast and cheap means of online fund exchange. By January 15, the BTC:USD exchange rate was at about $170, thereafter hovering around $200.

This is about an 82% drop in value from the currency’s peak of $1,130 in 2014, and about a 76% drop from the same time in January 2014, when BTC was at $850. In mid-December, however, Bitcoin was trading at about $350, which means the month-on-month drop was not as drastic.
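The quoted percentages follow from simple arithmetic on the prices above. Here is a minimal sketch, assuming the "around $200" level as the current price; the names `percent_drop`, `REFERENCE_PRICES_USD` and `drops` are illustrative only.

```python
CURRENT_USD = 200  # assumption: the article's "around $200" level

# Reference prices quoted in the article, in US dollars.
REFERENCE_PRICES_USD = {
    "peak": 1130,
    "January 2014": 850,
    "mid-December": 350,
}


def percent_drop(reference_usd, current_usd):
    """Relative fall from reference_usd to current_usd, in percent."""
    return (reference_usd - current_usd) / reference_usd * 100


def drops(current_usd=CURRENT_USD):
    """Return {reference label: drop rounded to a whole percent}."""
    return {
        label: round(percent_drop(price, current_usd))
        for label, price in REFERENCE_PRICES_USD.items()
    }


if __name__ == "__main__":
    for label, drop in drops().items():
        print(f"vs {label}: -{drop}%")
```

With a $200 price this gives 82% against the peak, 76% against January 2014, and 43% against mid-December, which is the smaller month-on-month fall the article alludes to.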

Bitcoin is sensitive to both positive and negative news about cryptocurrencies, and this drop has coincided with the opening statements of the criminal case against Ross Ulbricht, the alleged founder behind dark website Silk Road. In the same case, Mt. Gox founder Mark Karpeles has been implicated as having possible links to being Silk Road’s “Dread Pirate Roberts”.

Such severe fluctuations in Bitcoin’s value have marred the image of the cryptocurrency, making it look unstable and therefore inadequate as a form of monetary exchange. While speculators have used fluctuations to profit from rises in value, a decline in the price of BTC often has negative repercussions, not only for those who hold Bitcoin in the hope that its value will appreciate, but also for the community surrounding the cryptocurrency.

Feeling the crunch

Startups and individuals that have invested in the cryptocurrency are feeling the crunch. Bitcoin mining startup CEX.IO, for example, has temporarily suspended mining operations, citing that this is “the result of cloud mining costs exceeding mining profit.”

Bitcoin is not the only currency that is currently being hit hard by wide fluctuations. The Russian Ruble, for example, lost value in the wake of the Ukraine-related crisis, as well as embargoes imposed in relation to the issue. Even the Swiss Franc has sharply gained 20% in only a few days' time due to the Swiss central bank’s lifting of the currency’s cap against the Euro.

This fluctuation has badly affected exporters and several hedge funds heavily invested in the Franc. Everest Capital’s Global Fund, which had about $830 million in assets as of end-2014, lost almost all its money with the Franc’s rise. FXCM reported having $225 million in “negative equity balances” from clients, necessitating a $300 million emergency loan to cover its losses. And this is all because of a supposedly stable currency suddenly gaining.

The future of Bitcoin

While the Bitcoin crash has spelled doom for some, others are unfazed. Jerry Brito has written in Wired, for example, that the price of Bitcoin does not matter at this time, as it is still in its early stages as a technology. He goes on to cite parallels between Bitcoin and the Web, which suffered setbacks in its infancy, with the only difference being that Bitcoin comes with a dollar value attached to it. With a longer time-frame to consider, he says, short-term rallies and crashes should not mar Bitcoin’s potential as a technology, not just as a cryptocurrency.

For some, the inherent value of Bitcoin is not its tradable dollar value, but its use as a fast and inexpensive means of exchange. NewsBTC’s Trevor Alpeter, for instance, has concerns about the price drops in the short term. However, he cites the possibility of a better regulatory environment and support for Bitcoin, as well as better acceptance among the venture capital community, as potential drivers for growth in the long term. It’s the underlying technology that will ultimately spell long-term success for the cryptocurrency.

This has been demonstrated with how some startups have experimented with sidechains, considered to be a protocol for powering next-generation Internet services, and potentially unifying alternative cryptocurrencies, the so-called alt-coins.

Is this a sign of things to come for both financial markets and the cryptocurrency community? Perhaps these are unexpected shocks, but, at least for Bitcoin, there is hope that things will normalize in the end.

The post Bitcoin Dips Below $200, a Sign of Things to Come? appeared first on VR World.